Anthropic is experiencing rapid revenue expansion even as it faces a political and regulatory dispute with the U.S. government. The artificial intelligence company has increased its annualized revenue run rate to more than $19 billion, more than double the roughly $9 billion run rate it had at the end of 2025.

The growth marks a sharp rise from around $14 billion reported only weeks earlier. The surge has been driven by strong adoption of Anthropic’s AI models and developer tools, particularly the programming-focused product Claude Code.

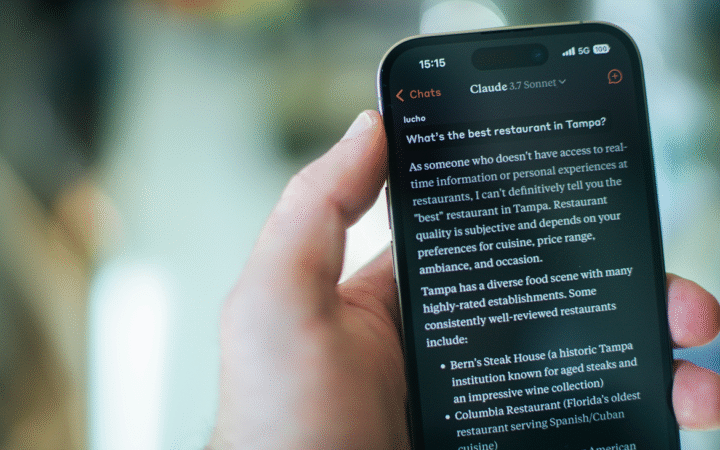

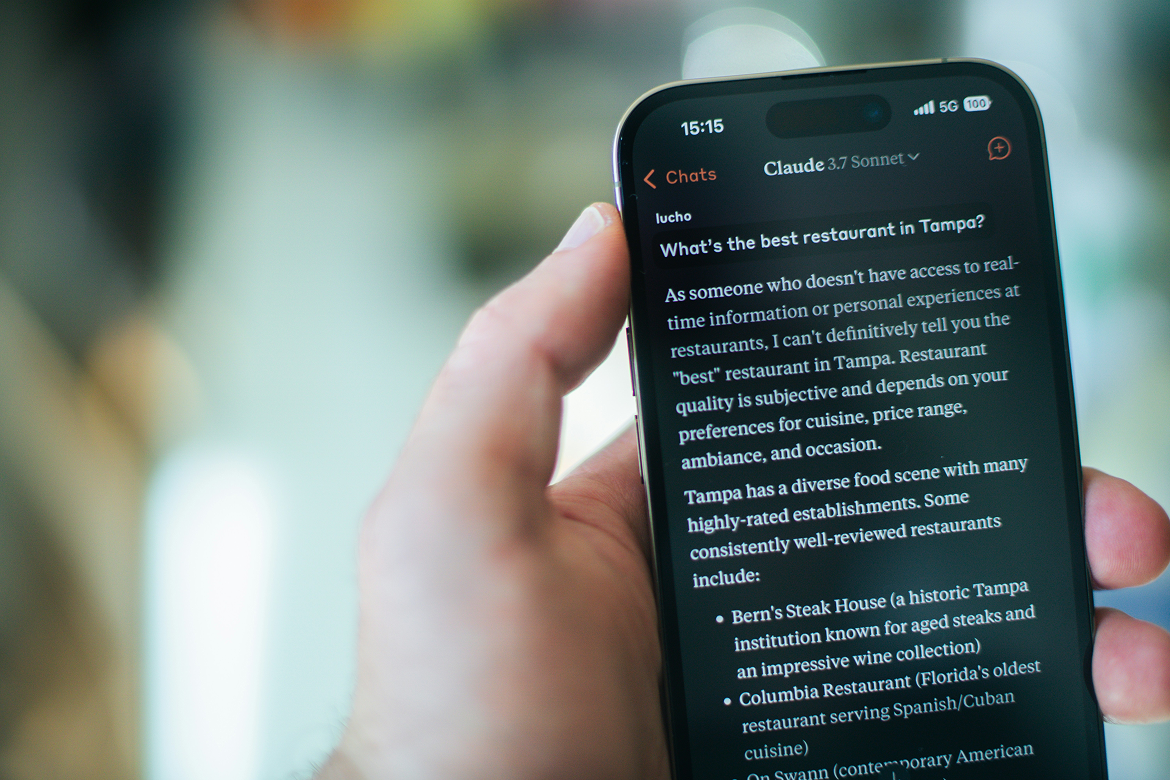

Anthropic, recently valued at about $380 billion, has also gained traction among individual users. Its Claude mobile app climbed to the top of Apple’s U.S. App Store rankings as debate intensified over AI partnerships with the Pentagon. The ranking shift came as some users, reacting to rival OpenAI’s defense agreement, uninstalled ChatGPT and switched platforms.

The company has also expanded its product portfolio with tools such as Claude Cowork, which integrates AI into collaborative software workflows. The launch of these products has disrupted segments of the software-as-a-service market, contributing to volatility among some SaaS company stocks.

Pentagon Conflict and Supply Chain Classification

Despite strong commercial momentum, Anthropic is facing growing pressure from the U.S. Department of Defense. Defense Secretary Pete Hegseth recently designated the company as a supply chain risk, a classification typically reserved for firms linked to geopolitical adversaries.

The designation followed months of negotiations between Anthropic and the Pentagon over how its AI systems could be used by military and intelligence agencies.

Anthropic insisted on maintaining safeguards preventing two specific applications: mass domestic surveillance of U.S. citizens and the use of AI systems in fully autonomous weapons. The company has argued that current AI models are not reliable enough to safely operate without human oversight and that large-scale surveillance of Americans would violate fundamental rights.

U.S. defense officials have previously said the military has no intention of deploying AI for mass surveillance or autonomous weapons, but they have argued that lawful uses of AI should remain unrestricted.

Legal Challenge and Industry Implications

Anthropic has described the supply chain risk designation as legally unsound and signaled it will challenge the move in court if necessary. The company argues the authority cited by the Defense Department applies only to contracts directly involving the Pentagon and should not restrict broader commercial use of its technology.

Under the company’s interpretation, the designation would not affect private customers or contractors using Claude outside Department of Defense agreements. Anthropic also says the designation should not restrict how defense contractors deploy the model in non-Pentagon projects.

Industry observers say the dispute highlights tensions between AI safety policies and national security priorities as governments accelerate adoption of generative AI tools.

Despite the political standoff, Anthropic’s commercial business continues to expand rapidly, with strong enterprise demand for coding and productivity tools built on Claude driving revenue growth alongside surging consumer adoption. Meanwhile, OpenAI is considering a deal to deploy its AI models across NATO’s unclassified networks following its Pentagon agreement. The potential deal underscores how defense alliances are rapidly integrating generative AI technologies even as debates over safeguards and governance continue.