Google has introduced a new personalization feature for its Gemini AI assistant that allows users to connect their Google apps with a single tap. The feature, called Personal Intelligence, is rolling out in beta to eligible Google AI Pro and AI Ultra subscribers in the United States and is designed to make Gemini more context-aware, proactive, and useful in everyday tasks.

When enabled, Personal Intelligence allows Gemini to securely access data from apps such as Gmail, Google Photos, YouTube, and Search. Users choose whether to turn the feature on and which apps to connect, with the option to disable access at any time. Google said the setup is designed to be simple while giving users granular control over how their information is used.

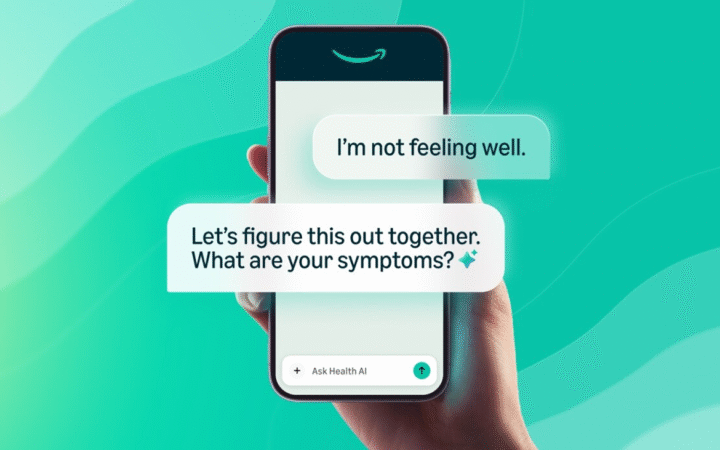

The feature is intended to help Gemini reason across multiple sources and retrieve specific details from personal content. Gemini can combine information from text, images, and video to answer questions or make suggestions tailored to an individual’s history and preferences. For example, Gemini can locate details from emails, identify information embedded in photos, and reference past activity to provide more relevant recommendations.

Privacy Controls and Safeguards

Google emphasized that privacy was a central design principle for Personal Intelligence. App connections are turned off by default, and Gemini accesses linked data only in response to user requests. The company said personal data stored in Gmail or Photos is not directly used to train its AI models. Instead, Gemini references that information to generate responses, while model training relies on filtered or obfuscated prompts and outputs.

Gemini also aims to provide transparency by explaining which sources it used to generate an answer. Users can ask follow-up questions to verify responses, regenerate replies without personalization, or use temporary chats that do not draw on connected apps. Guardrails restrict Gemini's proactive assumptions about sensitive topics, including health-related data, unless the user explicitly requests them.

Google acknowledged that errors and over-personalization may still occur during the beta phase. The company encouraged users to provide feedback when responses feel inaccurate or make incorrect assumptions. Handling nuance, such as changes in relationships or evolving interests, remains an active area of development.

Availability and Broader AI Strategy

Personal Intelligence works across web, Android, and iOS, and is compatible with all models available in the Gemini model picker. Google said it plans to expand availability to additional countries and eventually to the free tier. The feature is also expected to come to AI Mode in Search. At launch, it is limited to personal Google accounts and does not support Workspace enterprise or education users.

The update comes as Google’s Gemini models gain wider industry adoption, including a recent multi-year agreement under which Apple will use Gemini models and Google’s cloud infrastructure to power future AI features and upcoming Siri upgrades.