Anthropic has confirmed details of a forthcoming AI model after a security lapse exposed internal documents, revealing what the company describes as a significant advancement in its Claude family of systems.

The leak, caused by a configuration error in Anthropic’s content management system, made nearly 3,000 unpublished assets publicly accessible. The exposed data included draft blog posts, images, and internal PDFs. Security researchers identified the issue and alerted the company, which then restricted access.

Anthropic said the incident resulted from “human error” and described the materials as early drafts intended for future publication.

New Model Tier Above Opus

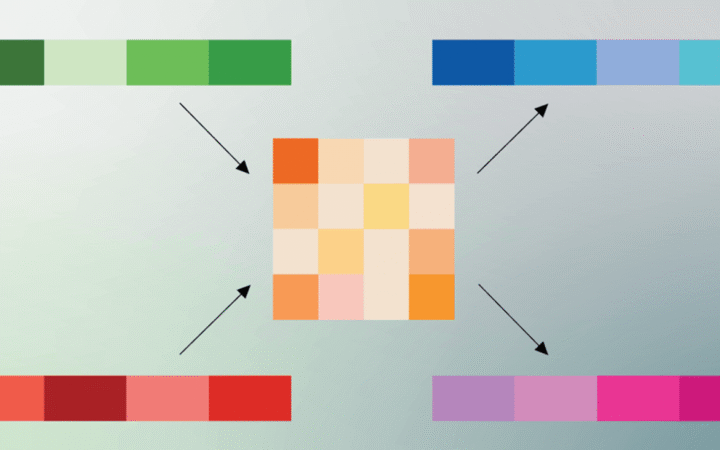

Among the leaked documents was information about a new model referred to as Claude Mythos, internally codenamed “Capybara.” The model is expected to introduce a new tier above Anthropic’s current lineup, which includes Opus, Sonnet, and Haiku.

According to the draft materials, the new system is designed to be more capable than the existing Opus models, particularly in areas such as coding, academic reasoning, and cybersecurity. Anthropic confirmed it is developing a next-generation general-purpose model and described it as a “step change” in capability.

The addition of a higher-tier model suggests Anthropic is continuing to scale its systems in response to growing competition in advanced AI, particularly in enterprise and technical domains.

Cybersecurity Concerns and Controlled Release

The leaked documents highlighted cybersecurity as a key area of focus for the new model. Anthropic reportedly considers the model’s capabilities in this domain to be significantly ahead of existing systems, raising concerns about potential misuse.

To address these risks, the company plans to limit early access to organizations focused on cybersecurity defense. This approach is intended to allow institutions to strengthen protections before broader deployment.

Anthropic has previously taken steps to mitigate misuse of its models, including blocking attempts to use its tools for cybercrime. The enhanced capabilities described in the leaked materials point to a growing emphasis on both the offensive and the defensive implications of AI systems.

Broader Implications and Internal Exposure

In addition to model details, the leak revealed plans for internal events, including an invite-only gathering for European business leaders. The exposure of such materials underscores the risks associated with managing sensitive information in rapidly evolving AI organizations.

The incident comes as Anthropic expands its influence in the AI sector, with increased enterprise adoption and ongoing infrastructure investments. The company is also reportedly targeting an IPO as early as October as it seeks to compete more directly with OpenAI.

While Anthropic has moved quickly to secure the exposed data, the leak provides an early look at its next-generation model strategy. It also illustrates how operational vulnerabilities can expose critical information in an industry where technological advances are closely watched.