Anthropic has identified industrial-scale attempts by three AI labs, DeepSeek, Moonshot, and MiniMax, to illicitly extract capabilities from Claude, Anthropic’s advanced AI system. As per the company post, these campaigns involved roughly 16 million exchanges across 24,000 fraudulent accounts, violating terms of service and regional access restrictions.

The labs used a technique called “distillation,” where a less capable model is trained on the outputs of a stronger model. While distillation is a legitimate AI training method, here it was exploited to shortcut development and acquire high-value capabilities at scale, including agentic reasoning, coding, and tool use.

National Security and Safety Risks

Illicitly distilled models often lack safeguards present in legitimate AI systems, increasing the risk of deployment in malicious or military contexts. These unprotected models could be incorporated into surveillance, disinformation campaigns, or offensive cyber operations. Anthropic emphasizes that distillation attacks undermine export controls and threaten the global competitive edge of U.S. AI research.

Details of the Campaigns

DeepSeek (~150,000 exchanges) focused on reasoning, reinforcement-learning-style grading, and generating censorship-safe alternatives for politically sensitive queries.

Moonshot (~3.4 million exchanges) targeted agentic reasoning, tool use, coding, computer-use agent development, and computer vision, using hundreds of accounts to evade detection.

MiniMax (~13 million exchanges) extracted agentic coding and orchestration capabilities. Anthropic detected the campaign while MiniMax was still actively training its model, giving unprecedented visibility into the full lifecycle of distillation attacks.

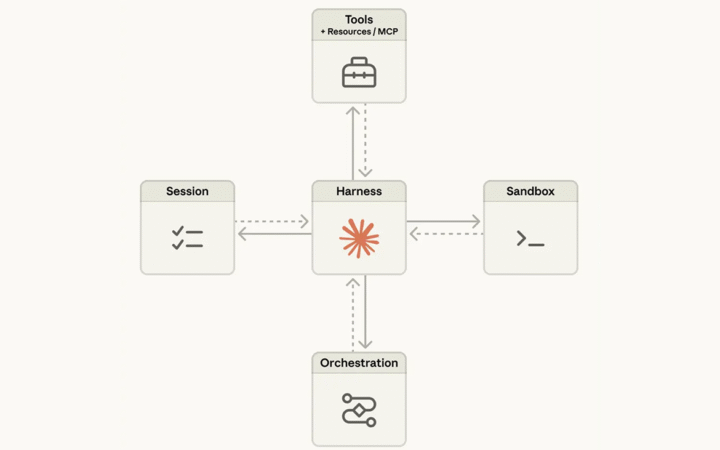

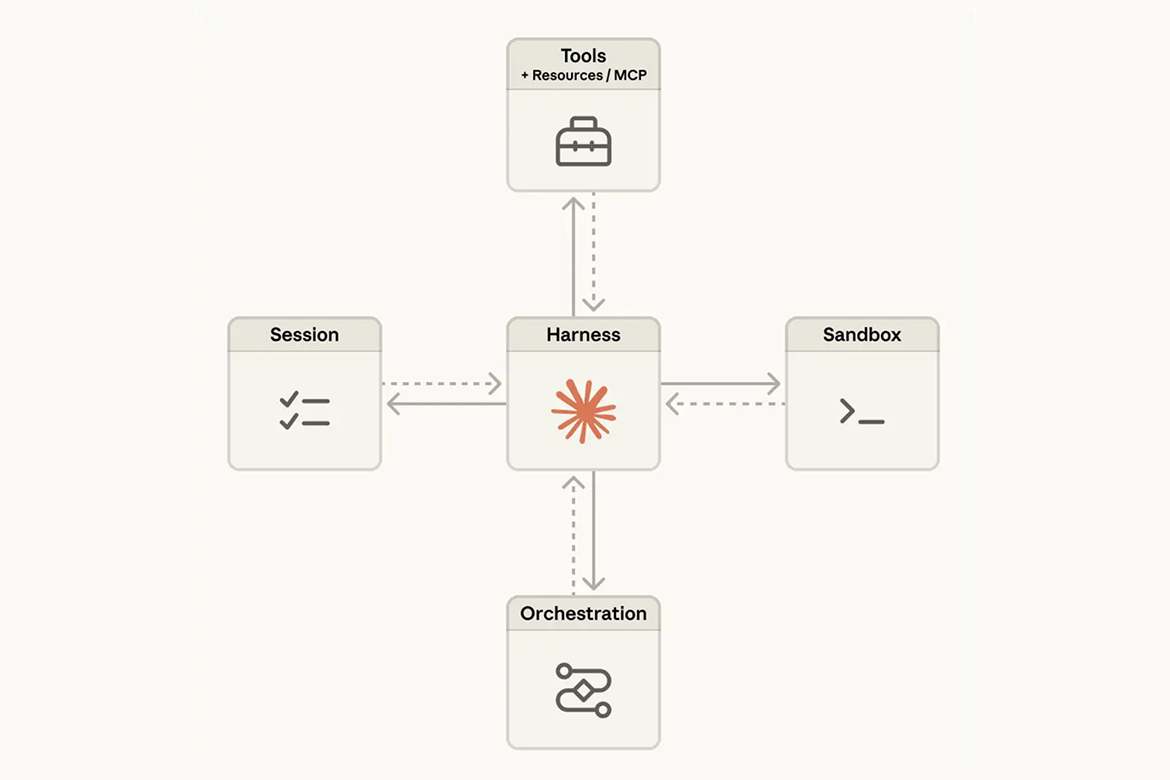

How Distillation Attacks Work

Labs circumvent regional restrictions using proxy services and “hydra cluster” architectures—networks of fraudulent accounts that distribute traffic across APIs and cloud platforms. Prompts are carefully crafted and repeated at massive scale to extract specific capabilities, creating training data for unauthorized models.

Anthropic’s Response

Anthropic is strengthening defenses through:

- Detection: Classifiers and behavioral fingerprinting systems to identify patterns of distillation.

- Intelligence sharing: Technical indicators shared with other AI labs, cloud providers, and authorities.

- Access controls: Enhanced verification for educational, research, and startup accounts.

- Countermeasures: Product, API, and model-level safeguards to reduce illicit distillation efficacy.

Anthropic calls for coordinated industry action, combining AI labs, cloud providers, and policymakers to counter the growing threat.

The disclosure underscores the critical importance of AI safety and secure access as frontier models become increasingly powerful and globally sought after.