Following a White House directive, the U.S. Departments of State, Treasury, and Health and Human Services have moved to cease using Anthropic’s AI products, including its Claude chatbot platform. The Pentagon had already begun transitioning to alternative providers such as OpenAI.

Treasury Secretary Scott Bessent confirmed on X that the department was terminating all use of Anthropic technology, while HHS told employees to switch to other platforms such as OpenAI’s ChatGPT and Google’s Gemini. The State Department similarly announced it would switch its in-house chatbot, StateChat, to OpenAI’s GPT-4.1. A State Department spokesperson said these steps align with President Donald Trump’s directive to cancel Anthropic contracts and bring the department’s programs into full compliance with it.

William Pulte, director of the Federal Housing Finance Agency, said his agency and the mortgage-finance firms it oversees, Fannie Mae and Freddie Mac, were also ending all use of Anthropic products.

National Security and Industry Implications

President Trump labeled Anthropic a supply-chain risk, a designation typically reserved for foreign suppliers deemed a potential threat. The move follows a standoff between Anthropic and the Pentagon over AI deployment safeguards. Sources indicate the dispute centered on safeguards that would prevent the U.S. military and intelligence agencies from using Anthropic’s AI for autonomous weapons targeting or domestic surveillance.

OpenAI, backed by Microsoft and Amazon, quickly moved to fill the gap. The company announced a deal to deploy AI models in the Defense Department’s classified networks. CEO Sam Altman later posted on X that OpenAI would amend the agreement to clarify that its technology would not be used to deliberately track or surveil U.S. persons or nationals, including through the acquisition of commercial data.

Transition Challenges and Broader Impact

The rapid agency transitions underscore the operational complexity of replacing AI tools deeply integrated into federal workflows. Claude’s prior use in sensitive military and intelligence tasks highlights the difficulty of enforcing swift cutoffs. Analysts note that the shifts also reflect broader tensions over how AI safety, ethics, and governance intersect with national security priorities.

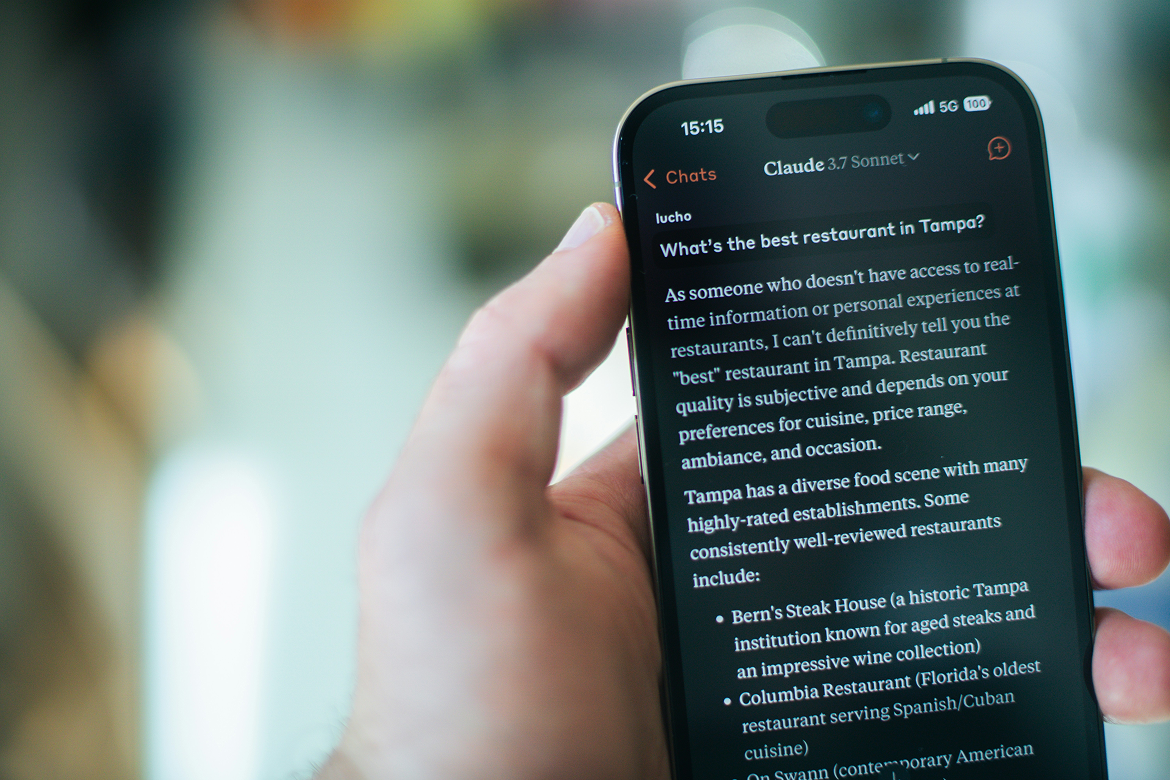

Meanwhile, Anthropic’s Claude has seen a surge in consumer adoption, rising to No. 1 on the U.S. App Store as public backlash grew against OpenAI’s Pentagon deal. The contrast points to a growing divergence between federal and consumer use of the product.