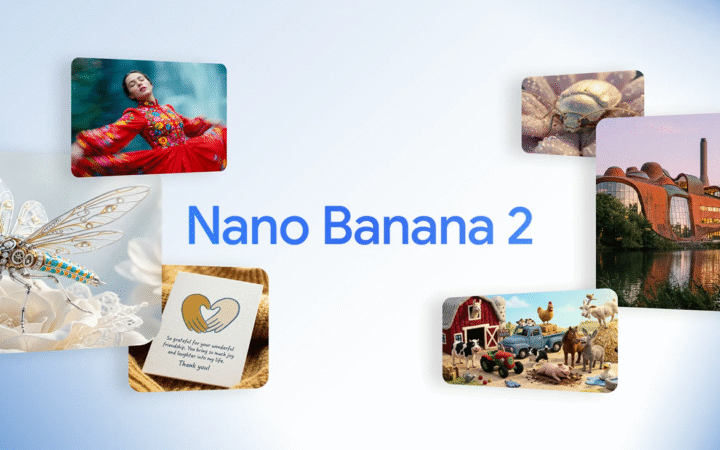

Google has released Gemini Embedding 2, its first fully multimodal embedding model built on the Gemini architecture. The model is now available in public preview through the Gemini API and Vertex AI platform.

The new system expands beyond traditional text embeddings by mapping multiple data types into a single vector space. Gemini Embedding 2 can process text, images, video, audio, and documents, enabling applications that require semantic understanding across different media formats.

Embeddings are numerical representations of content that allow AI systems to understand relationships between pieces of information. They are widely used in tasks such as semantic search, recommendation systems, clustering, and retrieval-augmented generation.
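To make the idea concrete, here is a minimal sketch of how semantic search over embeddings typically works: items are ranked by the cosine similarity between their vectors and a query vector. The three-dimensional vectors and the item names are made up for illustration; real embeddings would come from the model and have far more dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def rank_by_similarity(query, corpus):
    """Return (name, score) pairs sorted from most to least similar."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in corpus.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Toy vectors, invented for this example (not real model output).
corpus = {
    "cat_photo": [0.9, 0.1, 0.0],
    "dog_video": [0.7, 0.3, 0.1],
    "tax_pdf": [0.0, 0.1, 0.9],
}
query = [1.0, 0.0, 0.0]

ranking = rank_by_similarity(query, corpus)
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

Because every item lives in the same vector space, the same ranking function would apply whether the stored vectors came from text, images, or video.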

By integrating multiple modalities, the model allows developers to simplify pipelines that previously required separate systems for different types of data. According to Google, the unified embedding space can capture relationships between media types, improving the accuracy of multimodal search and analysis.

Multimodal Capabilities and Input Support

Gemini Embedding 2 supports a wide range of inputs across several formats. Per request, the model can process up to 8,192 tokens of text, up to six images in PNG or JPEG format, and up to 120 seconds of video in MP4 or MOV format.

The model can also ingest audio directly without requiring transcription and can embed PDF documents of up to six pages. Developers can combine multiple input types within a single request, such as pairing images with text prompts to generate richer semantic representations.
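The limits reported above could be checked on the client before a request is sent. The sketch below is one hypothetical way to do that: the `EmbedRequest` class, its field names, and `check_limits` are all invented for illustration and are not part of the Gemini API. Only the numeric limits and file formats come from the article.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Limits as reported in the article.
MAX_TEXT_TOKENS = 8192
MAX_IMAGES = 6
MAX_VIDEO_SECONDS = 120
MAX_PDF_PAGES = 6
IMAGE_FORMATS = {"png", "jpeg"}
VIDEO_FORMATS = {"mp4", "mov"}

@dataclass
class EmbedRequest:
    """Hypothetical description of one mixed-input request."""
    text_tokens: int = 0
    image_formats: List[str] = field(default_factory=list)
    video_seconds: float = 0.0
    video_format: Optional[str] = None
    pdf_pages: int = 0

def check_limits(req):
    """Return a list of human-readable problems; empty means within limits."""
    problems = []
    if req.text_tokens > MAX_TEXT_TOKENS:
        problems.append(f"text has {req.text_tokens} tokens, limit is {MAX_TEXT_TOKENS}")
    if len(req.image_formats) > MAX_IMAGES:
        problems.append(f"{len(req.image_formats)} images, limit is {MAX_IMAGES}")
    for fmt in req.image_formats:
        if fmt.lower() not in IMAGE_FORMATS:
            problems.append(f"unsupported image format: {fmt}")
    if req.video_seconds > MAX_VIDEO_SECONDS:
        problems.append(f"video is {req.video_seconds}s, limit is {MAX_VIDEO_SECONDS}s")
    if req.video_format is not None and req.video_format.lower() not in VIDEO_FORMATS:
        problems.append(f"unsupported video format: {req.video_format}")
    if req.pdf_pages > MAX_PDF_PAGES:
        problems.append(f"PDF has {req.pdf_pages} pages, limit is {MAX_PDF_PAGES}")
    return problems

ok_request = EmbedRequest(text_tokens=100, image_formats=["png", "jpeg"])
bad_request = EmbedRequest(text_tokens=9000, image_formats=["gif"], pdf_pages=7)
print(check_limits(ok_request))
print(check_limits(bad_request))
```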

This multimodal capability allows AI systems to analyze complex datasets where information appears in different formats. For example, developers could use a photo or video clip as a search query to retrieve related media assets or documents.

Flexible Embedding Dimensions and Performance Gains

Like earlier Google embedding models, Gemini Embedding 2 uses Matryoshka Representation Learning, a training technique that nests information so that the leading dimensions of a vector form a usable, smaller embedding on their own. This allows developers to adjust embedding sizes depending on performance and storage requirements.

The model’s default vector size is 3,072 dimensions, though developers can scale outputs to smaller dimensions such as 1,536 or 768 for more efficient storage and processing.
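As a rough illustration of the trade-off, a Matryoshka-style vector can be shortened by keeping its leading dimensions and rescaling the result to unit length so that cosine or dot-product comparisons stay meaningful. The sketch below does this on the client side with toy values; it is an assumption for illustration, since the article does not describe how the API itself produces smaller outputs or whether it returns them already normalized. The helper names are hypothetical.

```python
import math

def truncate_embedding(vec, dims):
    """Keep the first `dims` values and rescale them to unit length."""
    if not 0 < dims <= len(vec):
        raise ValueError("dims must be between 1 and the vector length")
    head = list(vec[:dims])
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0:
        return head  # an all-zero prefix cannot be normalized
    return [x / norm for x in head]

def bytes_per_vector(dims, bytes_per_value=4):
    """Storage for one vector, assuming 32-bit floats by default."""
    return dims * bytes_per_value

# Toy 4-dimensional vector standing in for a 3,072-dimensional one.
full = [3.0, 4.0, 12.0, 0.5]
small = truncate_embedding(full, 2)
print(small)

# Storage per vector at the sizes mentioned in the article (float32 assumed).
for dims in (3072, 1536, 768):
    print(dims, bytes_per_vector(dims), "bytes")
```

Under the float32 assumption, dropping from 3,072 to 768 dimensions cuts storage per vector from 12,288 bytes to 3,072 bytes, a fourfold reduction.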

Google said the model demonstrates improved performance across text, image, video, and speech tasks compared with previous embedding models. The company noted that the model’s multimodal capabilities could enable new applications in areas such as media search, sentiment analysis, and data clustering.

Early partners are already using the technology to build advanced search tools. Paramount Skydance reported that the model improved its ability to locate video assets based on text queries, allowing the system to identify visual expressions and retrieve related media more accurately.

Gemini Embedding 2 is now accessible through Google’s developer tools and can integrate with AI frameworks such as LangChain and LlamaIndex, as well as vector databases such as Weaviate, Qdrant, and ChromaDB. Google said the model is designed to serve as a foundation for next-generation multimodal AI systems that work with diverse forms of data.
