Palantir Technologies has announced a new sovereign AI operating system reference architecture developed in partnership with Nvidia. The system is designed to provide organizations with a turnkey AI data center environment that integrates hardware infrastructure, data management, and AI application deployment.

The platform, called the Palantir AI OS Reference Architecture (AIOS-RA), combines Nvidia’s AI hardware and software stack with Palantir’s enterprise data and AI platforms. The companies said the architecture delivers a production-ready system capable of running advanced AI workloads while maintaining strict data sovereignty and operational control.

The reference architecture is built on Nvidia’s Enterprise Reference Architectures and is optimized to run Palantir’s full software ecosystem. This includes the company’s Artificial Intelligence Platform (AIP), Foundry data platform, Apollo deployment system, Rubix security framework, and AIP Hub.

Full-Stack AI Data Center Design

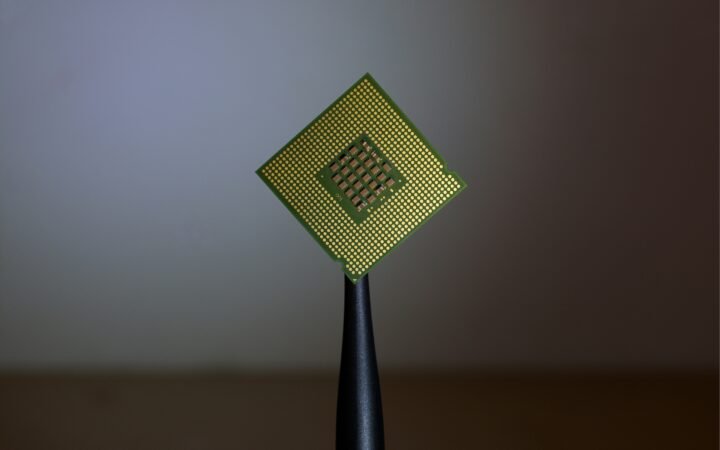

The system integrates Nvidia’s Blackwell Ultra AI infrastructure, including servers equipped with eight Blackwell Ultra GPUs and Spectrum-X Ethernet networking designed for AI training and inference workloads.

Alongside the hardware layer, the architecture includes a hardened Kubernetes-based compute environment for running Foundry services such as data cataloging, pipeline development, and analytics.

Palantir’s Rubix platform provides a zero-trust security model for Kubernetes infrastructure, while the Apollo system automates deployment and lifecycle management across distributed environments.

The architecture also incorporates Nvidia’s AI Enterprise software stack, CUDA-X libraries, Nemotron open AI models, and Magnum IO data acceleration tools to improve training and inference performance.

Supporting Sovereign and Edge AI Deployments

The companies said the architecture is particularly suited for organizations operating in sensitive environments where data control and regulatory compliance are critical. These include government agencies, defense organizations, and industries with strict data residency requirements.

By enabling on-premises and edge deployments, the sovereign AI architecture allows organizations to run AI workloads locally without relying on public cloud infrastructure. This approach can also reduce latency for mission-critical applications and enable AI deployments in geographically distributed environments.

“From our first deployment with the United States government and in every deployment since, our software has had to meet the moment in the most complex and sensitive environments where customers must maintain control,” said Palantir Chief Architect Akshay Krishnaswamy.

Nvidia’s enterprise AI platforms team said the collaboration reflects growing demand for integrated AI infrastructure solutions.

“AI is redefining the infrastructure stack — demanding, latency-sensitive and data-sovereign environments require a full-stack architecture,” said Nvidia Vice President Justin Boitano.

The companies said the joint architecture is designed to help organizations convert large volumes of operational data into actionable intelligence while maintaining control over infrastructure, models, and applications.