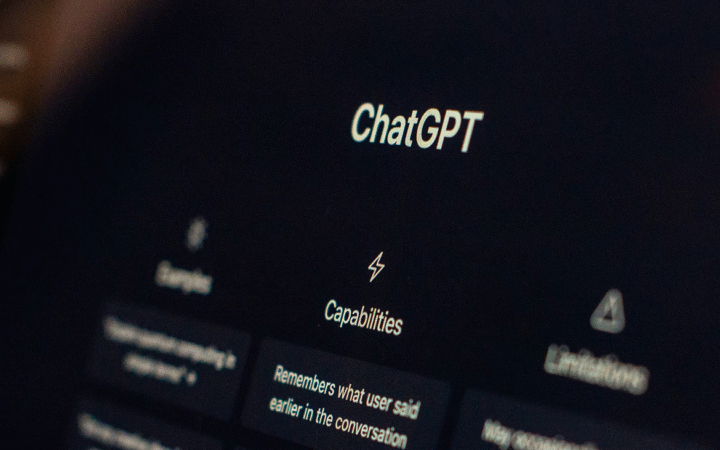

OpenAI is preparing to limit access to a new artificial intelligence model with advanced cybersecurity capabilities, signaling rising concern among AI developers about the risks of misuse. The model, still in development, is expected to be released only to a small group of vetted organizations, according to reports. The approach mirrors recent moves by Anthropic, which restricted access to its Mythos Preview model due to similar concerns about its ability to identify and exploit software vulnerabilities.

The shift reflects a broader turning point in AI development. Models are increasingly capable of autonomously analyzing code, discovering weaknesses, and even generating exploits. OpenAI has already begun testing controlled access through its “Trusted Access for Cyber” program, launched earlier this year alongside its GPT-5.3-Codex model. The initiative provides selected organizations with access to more advanced and less restricted systems for defensive cybersecurity work, backed by $10 million in API credits.

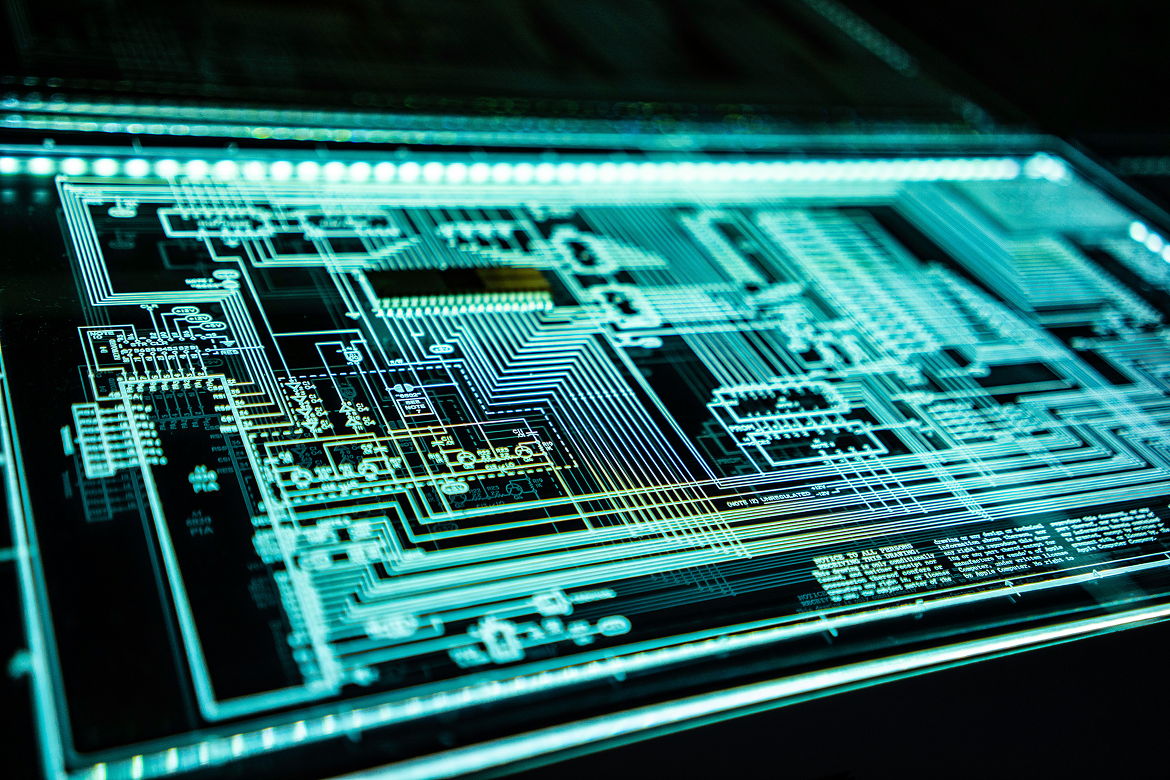

Security experts say the capabilities now emerging represent a fundamental change in the threat landscape. AI tools that were once limited to assisting developers are now approaching the level of skilled human hackers. This raises the risk that such systems could be used to target critical infrastructure, including energy grids, water systems, and financial networks. Industry leaders warn that the timeline for widespread availability of these capabilities may be measured in months rather than years.

A Shift Toward Controlled Deployment

The decision to restrict access highlights a growing tension between innovation and safety. AI companies are under pressure to advance model capabilities while preventing misuse. Limiting access to trusted partners allows developers to study risks and refine safeguards before broader release.

This approach resembles coordinated vulnerability disclosure, an established cybersecurity practice in which flaws are reported privately to affected vendors so that patches can be released before details become public. Some experts argue that staggered deployment of powerful AI models may become standard as capabilities continue to advance.

At the same time, there are limits to how much control companies can maintain. Researchers note that existing publicly available models are already capable of identifying certain vulnerabilities, suggesting that the underlying capabilities are spreading across the industry.

An Irreversible Turning Point

The moves by OpenAI and Anthropic underscore a growing consensus that AI has crossed a critical threshold in cybersecurity. Once these capabilities exist, they cannot easily be contained. Even if leading companies restrict access, similar models are likely to emerge elsewhere.

For enterprises and governments, the implication is clear: defenses must evolve quickly. Organizations may need to adopt AI-driven security tools at scale to keep pace with increasingly automated threats.

While it remains unclear whether OpenAI will eventually release the model more broadly, the current strategy reflects a cautious approach to a rapidly changing risk environment. The balance between openness and control is likely to remain a defining issue as AI systems become more powerful and more widely deployed.