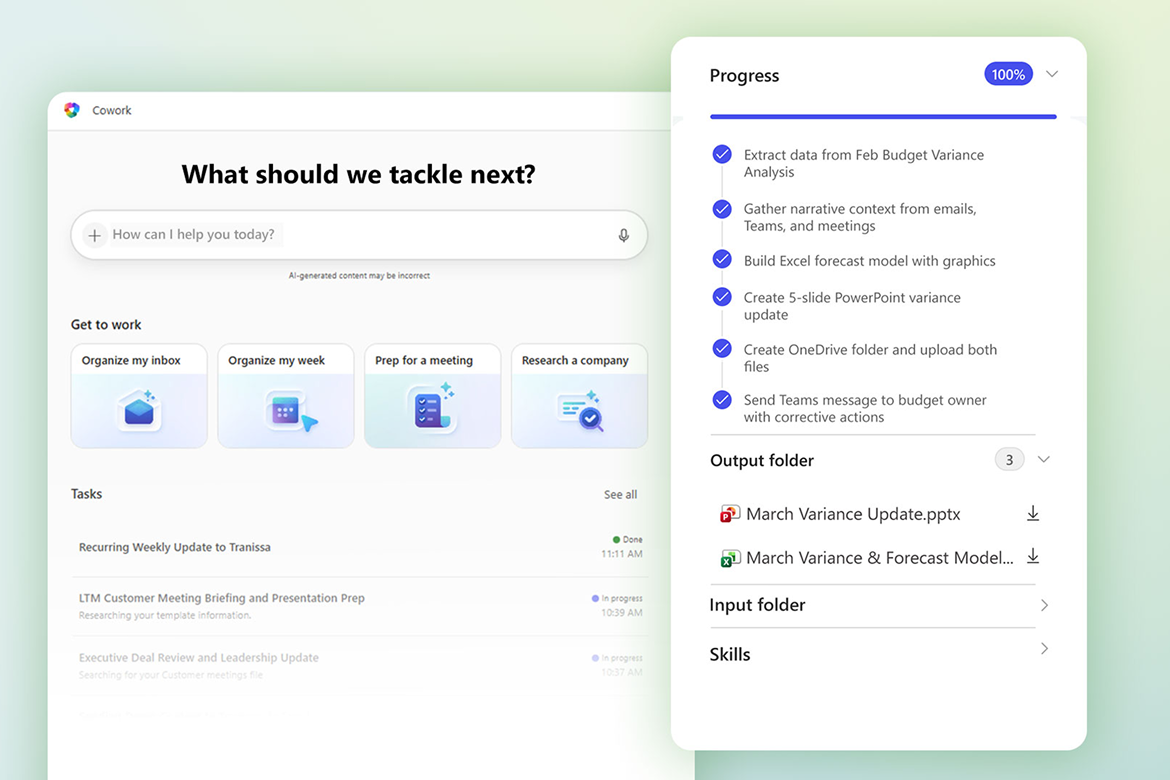

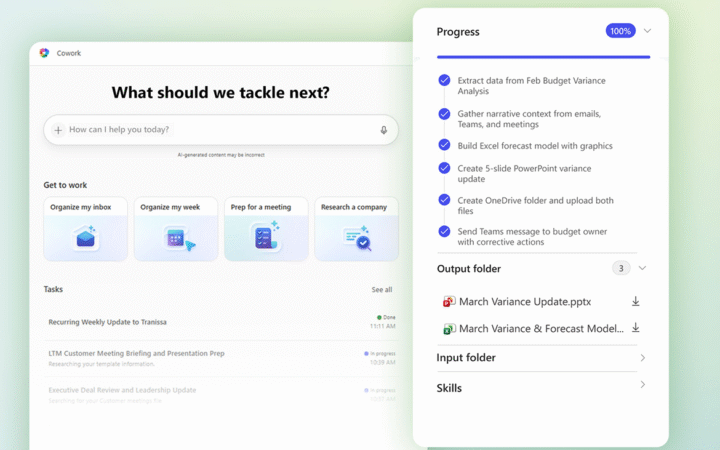

OpenAI has introduced plugin support for Codex, expanding its development tool into a broader platform for AI-driven workflows with integrations across popular workplace applications.

The new feature allows users to connect Codex with services including Slack, Notion, Figma, Gmail, and Google Drive. Through these integrations, Codex can access external data, automate tasks, and execute workflows that extend beyond traditional code generation.

The launch also marks the beginning of a plugin marketplace strategy, where reusable AI workflows can be distributed and adopted across teams with minimal setup.

Building an AI Workflow Ecosystem

Plugins in Codex are designed as bundled units that combine predefined workflows, integrations with external applications, and support for Model Context Protocol (MCP) servers. This structure lets developers and teams create reusable configurations tailored to specific tasks.

For example, Codex can summarize Slack channels, manage documents in Google Drive, or generate and modify designs through its Figma integration. These capabilities make the tool more than a coding assistant, allowing it to serve as a general-purpose productivity layer across enterprise environments.

Previously, similar workflows required manual configuration and technical expertise. With the introduction of plugins, users can install and deploy these capabilities through a centralized directory, lowering the barrier to adoption.

The approach aligns with a broader shift in the AI sector toward agent-based systems that can execute multi-step tasks across different tools and services.

Competing in the AI Platform Race

The expansion of Codex into a plugin-enabled platform reflects increasing competition among AI providers to build extensible ecosystems. Rivals have already emphasized integrations and modular architectures, particularly for enterprise use cases.

By launching a plugin marketplace, OpenAI aims to create a network effect around Codex, in which third-party developers contribute tools and workflows that extend the platform’s capabilities. This model mirrors strategies seen in cloud software and developer platforms, where ecosystems play a key role in driving adoption.

The inclusion of widely used services such as Slack, Notion, and Gmail highlights a focus on real-world productivity use cases. It also signals a move toward embedding AI more deeply into everyday workflows, rather than limiting it to isolated development tasks.

As organizations increasingly adopt AI agents to automate complex processes, tools like Codex are evolving to serve as coordination layers across software environments. The addition of plugins positions OpenAI to capture a larger share of this emerging market for AI-powered work platforms.