Nvidia has introduced Nemotron 3 Super, a new open AI model designed to support large-scale agentic AI systems. The model contains 120 billion parameters, with 12 billion active during inference, and is optimized for complex reasoning tasks across multi-agent workflows.

Nemotron 3 Super is designed to address performance and cost challenges that arise when organizations deploy multiple AI agents working together on complex tasks. These systems often require large volumes of context and repeated reasoning across multiple steps, increasing both computing costs and latency.

The model supports a context window of up to one million tokens, enabling AI agents to retain the full state of a workflow during extended operations. This helps prevent “goal drift,” in which agents lose alignment with the original objective as conversations grow longer and more complex.

Nvidia said the model handles multi-step reasoning tasks with high accuracy and is intended for applications such as software development agents, enterprise automation systems, and scientific research tools.

Architecture and Performance Improvements

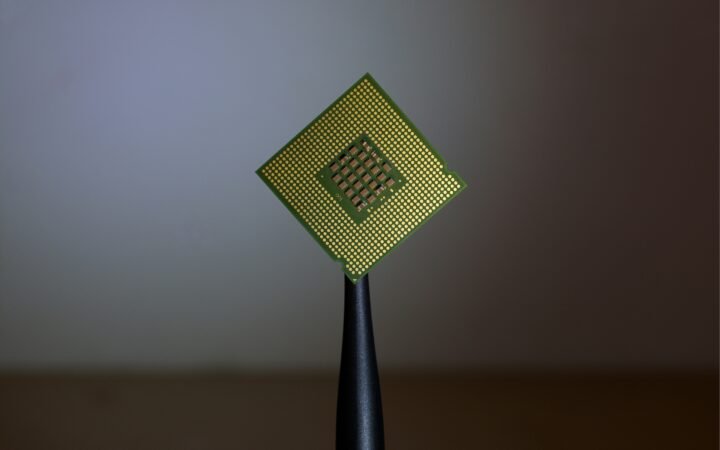

Nemotron 3 Super uses a hybrid mixture-of-experts architecture that activates only a subset of its parameters for each token. Of its 120 billion total parameters, only 12 billion are active per token, which lowers inference cost while maintaining performance.
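A mixture-of-experts layer sends each token to a few experts picked by a small router, so most expert weights sit idle for that token. The sketch below is a generic top-k router in plain Python, not Nemotron 3 Super's actual routing; the expert count (8), `k=2`, and the toy scaling experts are arbitrary assumptions chosen for readability.

```python
import math

def softmax(xs):
    # Numerically stable softmax over a short list of scores.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, router_weights, experts, k):
    """Send vector x to the top-k experts and mix their outputs.

    router_weights: one weight vector per expert (a linear router).
    experts: callables mapping a vector to a vector of the same length.
    Only the k selected experts are evaluated; the others stay idle.
    """
    # Router score for each expert: dot product of its weights with x.
    scores = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in router_weights]
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Renormalize gate weights over the chosen experts only.
    gates = softmax([scores[i] for i in top])
    out = [0.0] * len(x)
    for gate, i in zip(gates, top):
        y = experts[i](x)
        out = [o + gate * y_j for o, y_j in zip(out, y)]
    return out, top

# Toy setup: 8 experts, each just scales its input; every call is logged.
calls = []

def make_expert(i):
    def expert(v):
        calls.append(i)
        return [(i + 1) * v_j for v_j in v]
    return expert

experts = [make_expert(i) for i in range(8)]
router_weights = [[float(i), 0.0, 0.0, 0.0] for i in range(8)]
out, top = moe_forward([1.0, 2.0, 3.0, 4.0], router_weights, experts, k=2)
print(top, sorted(calls))  # prints [7, 6] [6, 7]
```

Only two of the eight experts run for this input, which is why per-token compute in a mixture-of-experts model tracks the active parameter count rather than the total.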

The architecture combines transformer layers with Mamba layers, state-space layers that use less memory and compute than attention on long sequences. Nvidia said the design delivers up to five times higher throughput and double the accuracy of earlier Nemotron Super models.
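Instead of attending over every earlier token, a Mamba-style layer updates a fixed-size hidden state one token at a time, so its memory does not grow with sequence length the way an attention key-value cache does. The sketch below is a heavily simplified, scalar-input, diagonal version of that recurrence, written for illustration only; it is not Nvidia's or the Mamba authors' implementation, and the parameter values are arbitrary assumptions.

```python
import math

def softplus(z):
    # Numerically stable log(1 + exp(z)); always positive.
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def selective_scan(xs, A, B, C, w_delta):
    """Simplified selective state-space scan over a scalar input sequence.

    A, B, C: per-channel parameters of a diagonal state of size N (A > 0).
    w_delta: scalar that makes the step size depend on the current input,
    loosely mirroring Mamba's input-dependent ("selective") discretization.
    """
    n = len(A)
    h = [0.0] * n  # fixed-size state, independent of len(xs)
    ys = []
    for x in xs:
        delta = softplus(w_delta * x)  # input-dependent step size
        for i in range(n):
            a_bar = math.exp(-delta * A[i])  # decay factor in (0, 1)
            h[i] = a_bar * h[i] + delta * B[i] * x
        ys.append(sum(C[i] * h[i] for i in range(n)))
    return ys, h

ys, h = selective_scan([1.0] * 10_000, A=[0.5, 1.0], B=[1.0, 1.0], C=[1.0, 1.0], w_delta=0.3)
print(len(ys), len(h))  # prints 10000 2
```

Ten thousand input tokens still leave a two-element state; an attention layer would instead keep keys and values for all ten thousand positions.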

The model also uses a latent mixture-of-experts technique that lets four specialized experts contribute to each generated token for the compute cost of activating only one.

Another optimization is multi-token prediction, which lets the model predict several tokens at once rather than one per step. This can speed up inference by up to three times compared with standard token-by-token generation.
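Predicting several tokens per step only saves time when the extra tokens are usually right. One common way to use multi-token prediction at inference time is self-speculative decoding: extra prediction heads draft a few tokens, the full model checks them in one parallel pass, and the longest agreeing prefix is kept. The article does not describe Nemotron 3 Super's exact decoding scheme, so the sketch below assumes this greedy draft-and-verify setup; `verify_draft` and `expected_tokens_per_step` are hypothetical helpers.

```python
def verify_draft(draft, target):
    """Greedy verification of drafted tokens.

    draft:  k tokens proposed ahead of time (e.g. by multi-token heads).
    target: k + 1 tokens from one parallel pass of the main model, where
            target[j] is its prediction after seeing the first j drafted tokens.
    Returns the tokens emitted this step: the longest prefix of the draft
    that agrees with the main model, plus the main model's next token.
    """
    accepted = []
    for d, t in zip(draft, target):
        if d != t:
            break
        accepted.append(d)
    accepted.append(target[len(accepted)])
    return accepted

def expected_tokens_per_step(alpha, k):
    """Expected tokens emitted per verification pass when each drafted token
    is accepted independently with probability alpha:
    (1 - alpha**(k + 1)) / (1 - alpha)."""
    if alpha == 1.0:
        return float(k + 1)
    return (1 - alpha ** (k + 1)) / (1 - alpha)

print(verify_draft(["the", "cat", "sat"], ["the", "cat", "ran", "off"]))
# prints ['the', 'cat', 'ran']
print(round(expected_tokens_per_step(0.8, 3), 3))  # prints 2.952
```

Ignoring the extra cost of drafting and verification, the gain depends on how often drafts are accepted, which is why speedups for this kind of technique are quoted as upper bounds.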

On Nvidia’s Blackwell platform, the model runs in NVFP4, Nvidia’s 4-bit floating-point format, which reduces memory requirements and increases inference performance by up to four times compared with FP8 precision on Hopper GPUs.
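Most of the memory saving comes from storing each weight in 4 bits. NVFP4 stores values as 4-bit E2M1 floats grouped into 16-value blocks, each with its own FP8 scale factor. The sketch below is a simplified round-to-nearest quantizer along those lines, plus weight-storage arithmetic for a 120-billion-parameter model. It keeps block scales at full precision and ignores activations and KV cache, so it illustrates the format rather than reproducing Nvidia's kernels; the helper names are hypothetical.

```python
import math

# Magnitudes representable by a 4-bit E2M1 float (1 sign, 2 exponent, 1 mantissa bit).
E2M1_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
BLOCK = 16  # values per block sharing one scale factor

def quantize_block(values):
    """Scale a block so its largest magnitude maps to 6.0, then round each
    value to the nearest E2M1 magnitude (keeping the sign).

    Simplification: the scale stays a Python float here; NVFP4 stores it
    as an FP8 (E4M3) value, plus a separate per-tensor FP32 scale.
    """
    amax = max(abs(v) for v in values)
    scale = amax / 6.0 if amax > 0 else 1.0
    q = [math.copysign(min(E2M1_GRID, key=lambda g: abs(abs(v) / scale - g)), v)
         for v in values]
    return q, scale

def quantize(values):
    # Split a flat list of weights into 16-value blocks and quantize each one.
    return [quantize_block(values[i:i + BLOCK]) for i in range(0, len(values), BLOCK)]

def dequantize(blocks):
    # Multiply each 4-bit code back by its block's scale.
    return [x * scale for q, scale in blocks for x in q]

def weight_gb(n_params, bits_per_value, scale_bits_per_block=0):
    # Storage for weights only (no activations or KV cache), in decimal GB.
    bits = n_params * bits_per_value + (n_params / BLOCK) * scale_bits_per_block
    return bits / 8 / 1e9

print(weight_gb(120e9, 8))     # FP8:   prints 120.0
print(weight_gb(120e9, 4, 8))  # NVFP4: prints 67.5
```

At 4 bits per weight plus one 8-bit scale per 16 weights, the weights need about 67.5 GB instead of 120 GB in FP8. The four-fold performance figure is Nvidia's and also reflects Blackwell's FP4 hardware, which this arithmetic does not model.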

Open Model and Enterprise Deployment

Nvidia is releasing Nemotron 3 Super with open weights under a permissive license, allowing developers to customize and deploy the model across workstations, data centers, or cloud platforms.

The company has also published training methodologies and datasets used to build the model, including more than 10 trillion tokens of synthetic and curated training data. Developers can further adapt the model using Nvidia’s NeMo platform for fine-tuning and reinforcement learning.

Several companies have already integrated Nemotron 3 Super into their systems. AI-native platforms such as Perplexity are using the model for search and agent orchestration, while developer tools including CodeRabbit, Factory, and Greptile are incorporating it into software engineering agents.

Enterprise software providers including Amdocs, Palantir, Cadence, Dassault Systèmes, and Siemens are also deploying the model to automate workflows in industries such as telecommunications, cybersecurity, and semiconductor design.

The model is available through multiple distribution channels, including build.nvidia.com, Hugging Face, OpenRouter, and Perplexity. Cloud providers including Google Cloud, Oracle Cloud Infrastructure, and Nvidia cloud partners such as CoreWeave and Together AI are offering deployment support, with availability planned on Amazon Web Services and Microsoft Azure.

Nemotron 3 Super is packaged as an Nvidia NIM microservice, allowing organizations to deploy the model across on-premises systems and cloud environments as they scale multi-agent AI applications.
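NIM microservices for language models expose an OpenAI-compatible HTTP API, so an application can call a self-hosted deployment much as it would a hosted endpoint. The sketch below only builds a chat-completions request with Python's standard library. The base URL (`http://localhost:8000`) and the model identifier are assumed placeholders, to be replaced with the values a given deployment reports.

```python
import json
import urllib.request

def build_chat_request(base_url, model, messages, max_tokens=512):
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {"model": model, "messages": messages, "max_tokens": max_tokens}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def send_chat(request, timeout=60):
    # Send the request and return the first choice's message text.
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]

# Hypothetical endpoint and model id; substitute your deployment's values.
req = build_chat_request(
    "http://localhost:8000",
    "example/nemotron-model",
    [{"role": "user", "content": "Summarize the open tickets in one paragraph."}],
)
# reply = send_chat(req)  # requires a running NIM endpoint
```

Because the request shape follows the OpenAI convention, the same client code can target an on-premises container or a cloud endpoint by changing only the base URL and model name; the served model name can be looked up with a GET request to `/v1/models`.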