Meta has introduced a new set of updates aimed at improving how it identifies and protects younger users across its platforms, including Instagram and Facebook. The company is expanding its use of artificial intelligence to detect underage accounts and enforce age-based protections, while also rolling out Teen Account safeguards to more regions. The changes come as regulatory scrutiny and public concern over youth safety online continue to increase globally.

A key part of the update is the use of more advanced AI systems to identify users under 13, who are not permitted on Meta's platforms. The company said it is now using visual analysis alongside text-based signals to assess whether an account belongs to a minor. This includes scanning posts, captions, and images for contextual clues such as references to school or birthday celebrations. If an account is flagged, it may be deactivated unless the user verifies their age through the company's verification checks.

Meta emphasized that the visual analysis does not rely on facial recognition. Instead, it evaluates general characteristics such as physical features and contextual elements in photos and videos to estimate age. The company is also deploying AI tools to assist with reviewing reports of underage users, aiming to improve both speed and accuracy compared with human moderation alone.

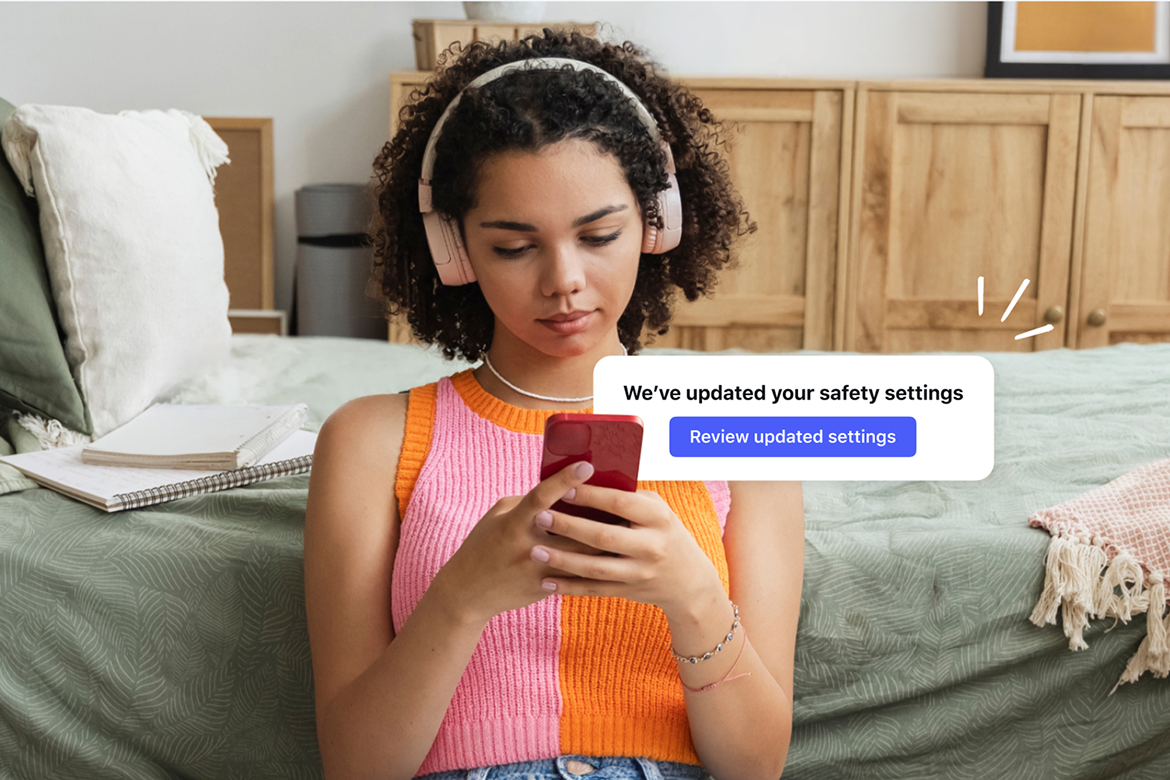

Alongside enforcement, Meta is expanding its system that automatically places suspected teens into restricted Teen Accounts. These accounts include built-in protections such as limits on who can contact users and what content they can see. After initial rollouts in the United States, Canada, the United Kingdom, and Australia, the company is extending these measures to 27 European Union countries and Brazil on Instagram. Facebook will also begin using the system in the US, with further expansion planned in the UK and EU.

Tighter Controls and Broader Coverage

The updates reflect a broader shift toward proactive age verification as platforms face increased pressure to protect younger users. By combining AI detection with automatic safeguards, Meta is reducing reliance on self-reported age, which has historically been unreliable.

For teens, this means more accounts will be placed into restricted environments by default, even if users attempt to bypass safeguards. Parents may also see more prompts and tools designed to encourage age transparency. For the wider industry, the rollout signals a move toward automated enforcement at scale, which competitors may need to match as expectations rise.

Meta has also called for age verification to happen at the app store level, which points to a potential structural change in how age assurance is handled. A centralized system could simplify compliance and create more consistent protections across apps, though it would require cooperation from app store operators such as Apple and Google.

Policy Pressure and Platform Evolution

Meta’s latest measures build on years of investment in youth safety features, including Teen Accounts across Instagram, Facebook, and Messenger. These systems automatically apply stricter privacy settings and content limitations for users under 18.

The company has increasingly turned to AI to address moderation challenges, including detecting harmful content and identifying policy violations. Age detection remains one of the most complex of these problems, because users can misrepresent their age and online behavior varies widely across regions.

Similar approaches are emerging across the industry. OpenAI introduced age prediction tools within ChatGPT that adjust safety settings for users believed to be younger, while allowing adults to verify their age to lift those restrictions. These parallel efforts highlight a growing consensus among major platforms that automated age assurance will play a central role in meeting regulatory demands and improving online safety for teens.