Google has expanded its Canvas feature within AI Mode in Search to all English-language users in the United States. The feature introduces a dedicated workspace that allows users to create documents, build tools, and organize projects directly inside Google’s AI-powered search interface.

Canvas functions as a dynamic side panel where users can draft content, develop interactive tools, and manage ongoing projects. The workspace draws on live information from the web and Google’s Knowledge Graph to keep projects populated with up-to-date data.

The rollout marks another step in Google’s effort to transform search from a traditional query interface into a broader productivity environment powered by generative AI.

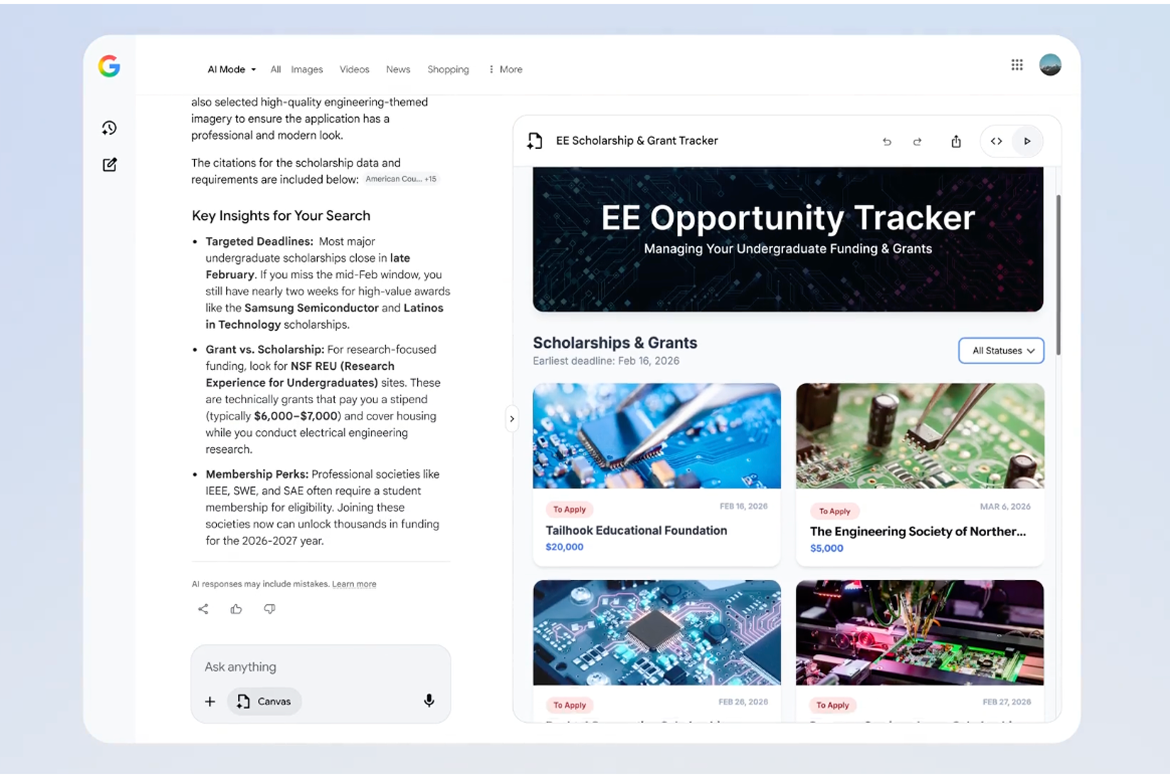

Users can access Canvas through the AI Mode tool menu and describe the project or tool they want to create. The system then generates a working prototype inside the Canvas panel, which can be edited and refined through conversational prompts.

Interactive Tools and Coding Capabilities

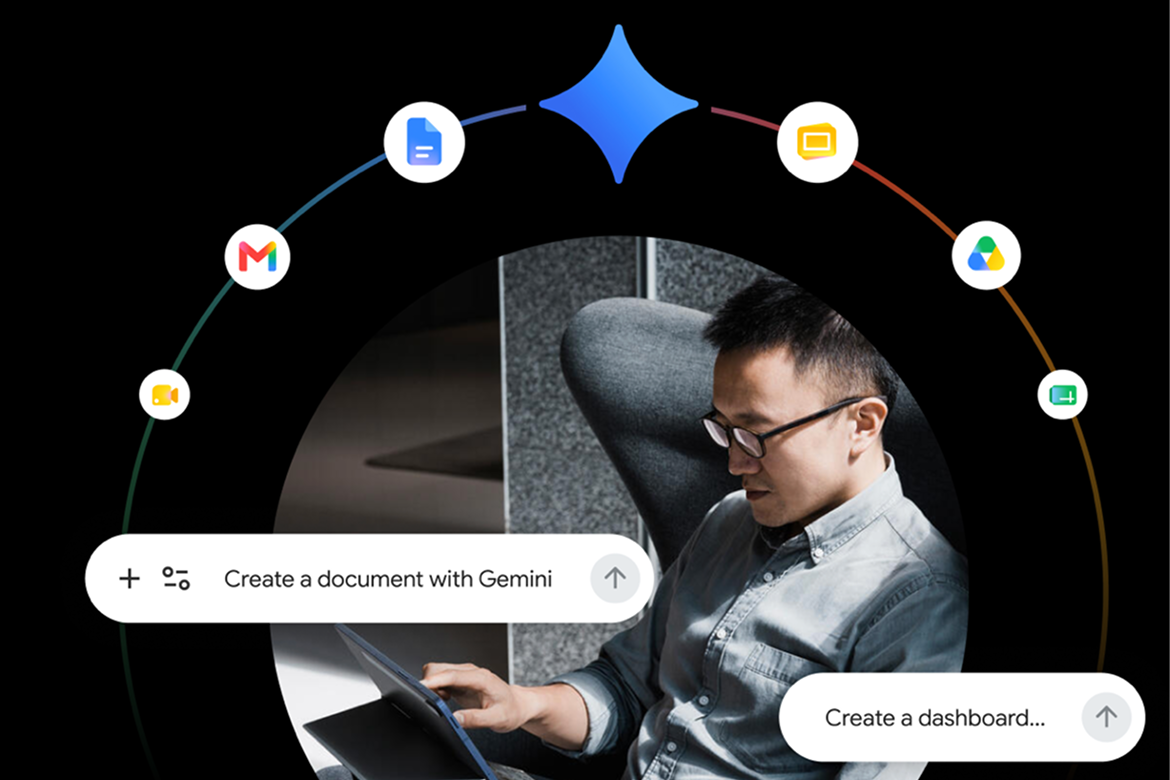

The updated Canvas environment includes expanded capabilities for both creative writing and coding tasks. Users can draft documents, create dashboards, or build lightweight applications without leaving the Search interface.

One example shared by Google involved an academic scholarship dashboard that aggregates application requirements, deadlines, and award amounts into a single interactive tool. Similar use cases could include study planners, travel itineraries, or data-tracking dashboards.

For more advanced users, Canvas also allows access to the underlying code powering generated tools. Developers can view and modify the code directly, enabling customization or further refinement of generated applications.

Google said the feature was designed to support iterative development. After generating an initial prototype, users can test functionality and request changes through follow-up prompts until the tool or document meets their needs.

AI Search as a Productivity Platform

The introduction of Canvas reflects a broader shift in how technology companies are positioning AI-assisted search as a platform for creation rather than just information retrieval.

By integrating writing, coding, and project management capabilities into the search experience, Google is competing more directly with AI assistants that function as productivity tools.

The move also aligns with the industry trend toward embedding generative AI into everyday workflows. Rather than requiring separate applications for coding, note-taking, or research, platforms like Canvas aim to consolidate those tasks within a single interface powered by AI models and real-time web data.