Strong outlooks from ASML and TSMC are reinforcing a clear message across the industry: the AI infrastructure boom is far from slowing down.

Both companies raised their forecasts this week, pointing to sustained demand for advanced chips used in training and running large AI models. The signals suggest that major cloud providers are continuing to invest heavily in compute, even as questions grow about returns on those investments.

AI Spending Machine Keeps Running

Executives say demand is still being driven by hyperscale customers. Companies like Microsoft, Amazon, and Meta are expected to collectively spend more than $600 billion this year on data centers and AI infrastructure.

TSMC CEO C.C. Wei pointed to strong signals across the supply chain, noting that demand is coming not only from direct customers but also from their downstream clients. In practice, that means cloud providers are racing to secure chips before competitors do.

This demand flows directly to chip designers such as Nvidia, AMD, and Broadcom, all of which rely heavily on TSMC’s manufacturing capacity.

The Bottleneck Problem

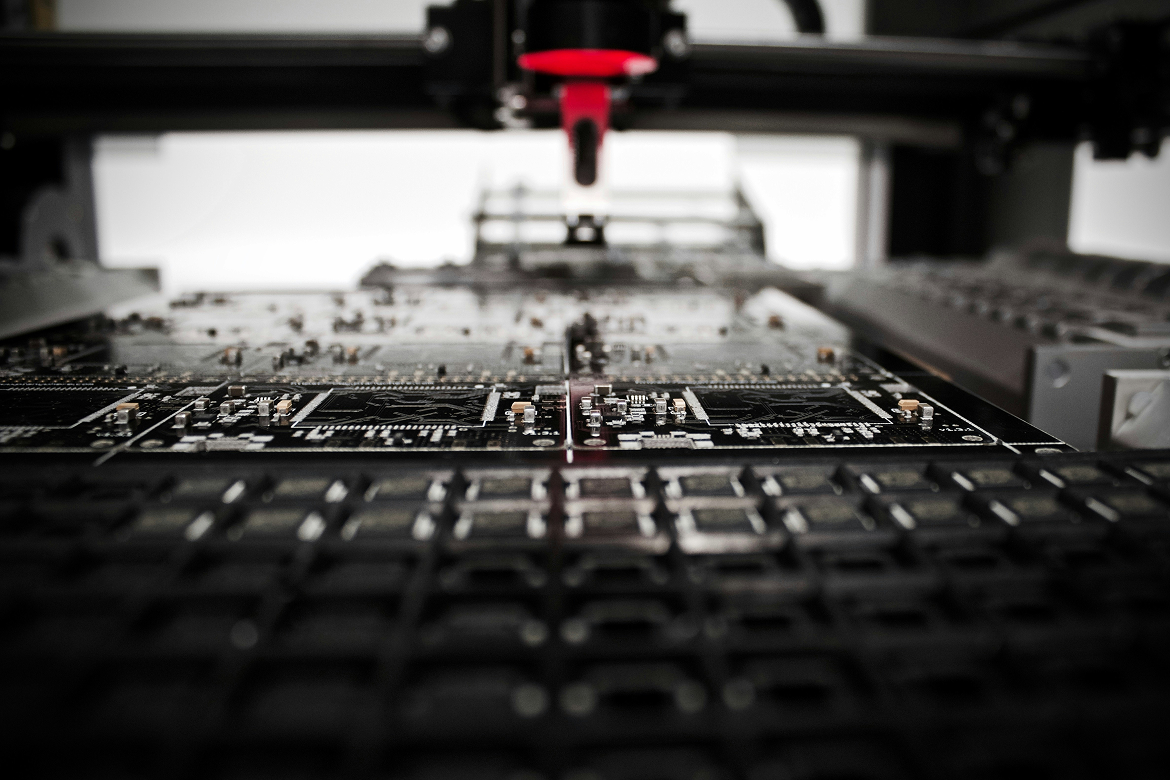

Despite strong demand, the industry faces a fundamental constraint. There are only a few companies capable of producing cutting-edge chips at scale.

ASML, which supplies the lithography machines required to manufacture advanced semiconductors, expects demand to exceed supply for the foreseeable future. That constraint is now affecting not just AI, but also smartphones and PCs.

TSMC echoed those concerns, noting that capacity remains tight even as the company ramps up capital spending to expand production.

This dynamic has pushed companies toward long-term agreements to lock in manufacturing capacity, sometimes years in advance. Securing supply has become as critical as designing the chips themselves.

Shift Toward Advanced AI Chips

Another notable shift is where demand is concentrating. Increasingly, spending is moving toward high-performance processors used for inference, the stage where trained AI models generate real-world outputs.

This reflects the next phase of AI adoption. After an initial wave focused on training large models, companies are now scaling deployment across products and services, which requires massive inference capacity.

Boom With Questions Attached

Even as forecasts remain strong, investor pressure is building. Markets are increasingly focused on whether massive AI spending will translate into meaningful returns.

Some analysts warn that the current pace of investment could eventually face limits, especially if monetization lags behind infrastructure build-out.

For now, however, the signals from ASML and TSMC suggest those limits have not yet arrived. Demand is still accelerating, supply is constrained, and the race for AI compute is intensifying across the entire technology stack.