Anthropic has introduced Managed Agents, a new system architecture designed to support long-running AI tasks by separating core components of agent behavior.

Introducing Claude Managed Agents: everything you need to build and deploy agents at scale.

It pairs an agent harness tuned for performance with production infrastructure, so you can go from prototype to launch in days.

Now in public beta on the Claude Platform.

— Claude (@claudeai) April 8, 2026

The approach decouples what the company describes as the “brain” of an AI system from its execution environment and its memory, so that each layer can operate, fail, and be replaced independently. The system is now available as part of Anthropic’s Claude Platform and is aimed at developers building complex, multi-step AI workflows.

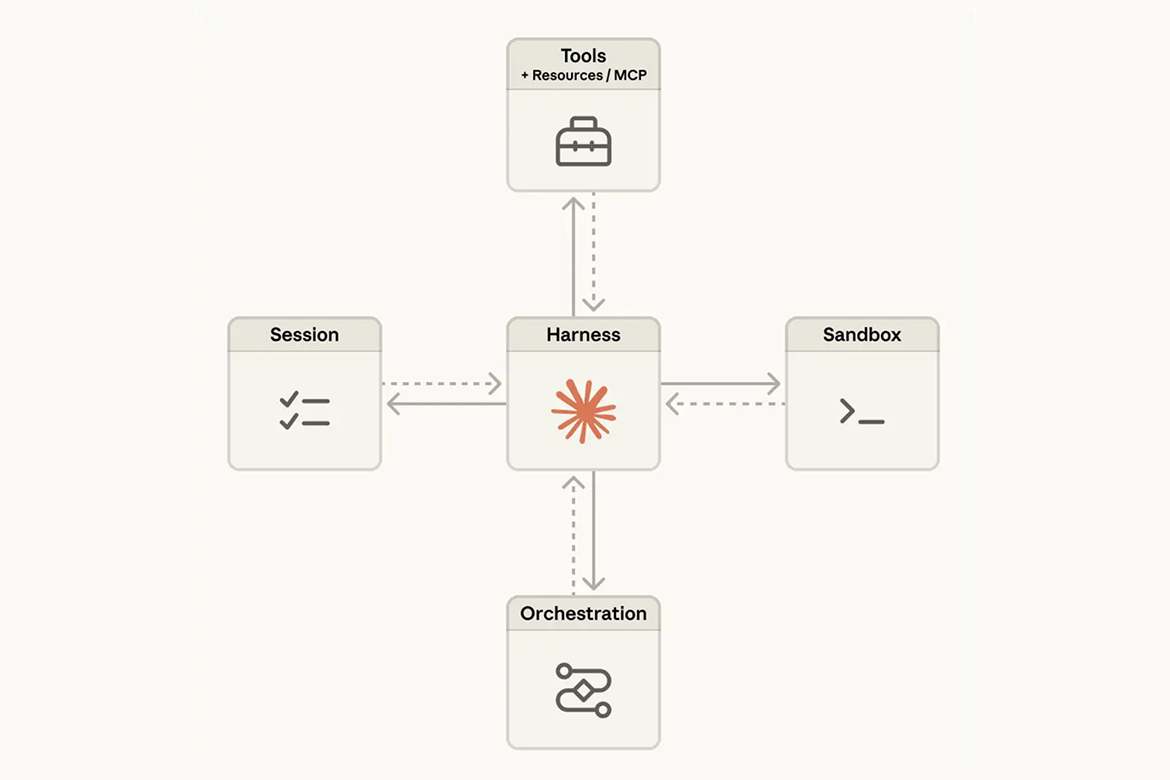

The architecture breaks AI agents into three main components: the session, which stores a durable log of events; the harness, which orchestrates model calls and tool usage; and the sandbox, where code execution and external actions take place. By separating these layers, Anthropic aims to avoid a common problem in AI systems where tightly coupled infrastructure becomes fragile as models evolve. Earlier designs placed all components in a single environment, making failures harder to diagnose and recover from.
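To make the three-way split concrete, here is a minimal Python sketch. It is not Anthropic's API: the class names `Session`, `Harness`, and `Sandbox`, their methods, and the `fake_model` function are all hypothetical stand-ins, and the "durable" log is only an in-memory list.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Session:
    """Append-only event log (in memory here; durable storage is assumed in practice)."""
    events: list[dict] = field(default_factory=list)

    def append(self, kind: str, payload: str) -> None:
        self.events.append({"kind": kind, "payload": payload})


class Sandbox:
    """Where commands execute; this stand-in only echoes what it was asked to run."""

    def run(self, command: str) -> str:
        return f"ran: {command}"


class Harness:
    """Orchestrates model calls and tool usage, recording each step in the session."""

    def __init__(self, session: Session, sandbox: Sandbox, model: Callable[[str], str]):
        self.session = session
        self.sandbox = sandbox
        self.model = model

    def step(self, user_input: str) -> str:
        self.session.append("user", user_input)
        command = self.model(user_input)      # the model decides what to run
        self.session.append("tool_call", command)
        result = self.sandbox.run(command)    # execution happens in the sandbox
        self.session.append("tool_result", result)
        return result


def fake_model(prompt: str) -> str:
    """Hypothetical model: turns any prompt into an echo command."""
    return f"echo {prompt}"


session = Session()
harness = Harness(session, Sandbox(), fake_model)
output = harness.step("hello")
```

Because the harness only holds references to the other two objects, either one could be swapped out without touching the orchestration logic, which is the property the article describes.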

One key motivation behind the redesign is the rapid improvement of AI models themselves. Anthropic noted that assumptions embedded in earlier systems, such as workarounds for model limitations, can quickly become outdated. For example, earlier models needed interventions to keep them from wrapping up tasks prematurely because of context limits, but newer models no longer exhibit that behavior. Managed Agents is designed to remain stable even as such capabilities change.

Decoupling for Reliability and Scale

The new system treats execution environments as interchangeable resources rather than fixed components. If a sandbox fails, the system can spin up a new one without disrupting the overall task. Similarly, the orchestration layer can restart independently by reconnecting to the session log, which acts as a persistent source of truth. This design reduces downtime and simplifies debugging, particularly for long-running or complex processes.
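The recovery behavior described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the product's implementation: `FlakySandbox`, `run_with_replacement`, and `pending_calls` are hypothetical names, the log is a plain list of tuples, and tool calls are assumed to run one at a time in order.

```python
class FlakySandbox:
    """Hypothetical sandbox that can be configured to crash."""

    def __init__(self, fail: bool):
        self.fail = fail

    def run(self, command: str) -> str:
        if self.fail:
            raise RuntimeError("sandbox crashed")
        return f"ok: {command}"


def run_with_replacement(command, sandbox, make_sandbox, log):
    """Run a command; if the sandbox fails, provision a fresh one and retry once."""
    log.append(("tool_call", command))
    try:
        result = sandbox.run(command)
    except RuntimeError:
        log.append(("sandbox_replaced", command))
        sandbox = make_sandbox()          # environments are interchangeable
        result = sandbox.run(command)
    log.append(("tool_result", result))
    return result, sandbox


def pending_calls(log):
    """What a restarted harness would still need to run, judged from the log alone."""
    started = [payload for kind, payload in log if kind == "tool_call"]
    finished = sum(1 for kind, _ in log if kind == "tool_result")
    return started[finished:]


log = []
result, sandbox = run_with_replacement(
    "ls", FlakySandbox(fail=True), lambda: FlakySandbox(fail=False), log
)
```

The second helper shows why the log serves as the source of truth: a new harness process needs nothing but the recorded events to work out where the task stopped.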

The decoupling also improves security. In earlier setups, sensitive credentials could be exposed within the same environment where AI-generated code was executed. Managed Agents separates these concerns, storing credentials in secure vaults and limiting direct access from execution environments. This reduces the risk that credentials are misused, including through prompt injection attacks.
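One common way to achieve this kind of separation is to let sandboxed code refer to a credential by name while a component outside the sandbox attaches the actual secret. The sketch below assumes that pattern; the article does not say this is how Managed Agents works. `Vault`, `sandbox_build_request`, `proxy_send`, `fake_transport`, the credential name, and the URL are all hypothetical.

```python
class Vault:
    """Hypothetical secret store that lives outside the execution environment."""

    def __init__(self, secrets: dict):
        self._secrets = dict(secrets)

    def resolve(self, name: str) -> str:
        return self._secrets[name]


def sandbox_build_request(url: str) -> dict:
    """Runs inside the sandbox: names the credential it needs but never holds it."""
    return {"url": url, "credential": "github_token"}


def proxy_send(request: dict, vault: Vault, transport):
    """Runs outside the sandbox: looks up the secret and forwards the request."""
    token = vault.resolve(request["credential"])
    headers = {"Authorization": f"Bearer {token}"}
    return transport(request["url"], headers)


def fake_transport(url: str, headers: dict) -> int:
    """Stand-in for a real HTTP client; returns a status code."""
    return 200 if headers.get("Authorization") == "Bearer s3cret" else 401


vault = Vault({"github_token": "s3cret"})
request = sandbox_build_request("https://example.invalid/api")
status = proxy_send(request, vault, fake_transport)
```

Under this arrangement, code generated by the model and run in the sandbox can trigger an authenticated call but has no value it could leak or exfiltrate.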

Toward Flexible AI Infrastructure

Anthropic’s design draws inspiration from operating systems, which abstract hardware into stable interfaces that remain consistent even as underlying technology changes. Similarly, Managed Agents introduces standardized interfaces that allow different components to evolve independently.

This flexibility extends to performance. By separating reasoning from execution, the system can start generating responses without waiting for full environment setup, reducing latency. Anthropic said this approach has significantly improved time-to-first-response metrics in internal testing.
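The latency benefit comes from overlapping two steps that a coupled design would run in sequence. A minimal `asyncio` sketch follows, with sleeps standing in for real work; the function names and timings are illustrative assumptions, not measurements from Anthropic.

```python
import asyncio


async def provision_sandbox(events: list) -> str:
    await asyncio.sleep(0.05)          # stand-in for slow environment setup
    events.append("sandbox_ready")
    return "sandbox"


async def first_model_response(events: list) -> str:
    await asyncio.sleep(0.01)          # stand-in for the first model call
    events.append("first_response")
    return "thinking..."


async def run_turn():
    events = []
    # Start provisioning in the background instead of awaiting it up front.
    sandbox_task = asyncio.create_task(provision_sandbox(events))
    reply = await first_model_response(events)
    # Only block on the sandbox once a tool call would actually need it.
    sandbox = await sandbox_task
    return reply, sandbox, events


reply, sandbox, events = asyncio.run(run_turn())
```

In this sketch the first response is recorded before the sandbox is ready, so the user-visible wait is bounded by the model call rather than by the sum of both steps.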

The system also supports more complex configurations, including multiple AI agents working in parallel and interacting with multiple execution environments. This allows developers to build more sophisticated workflows without being constrained by a single runtime environment.
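A many-to-many arrangement of this kind might look like the following sketch, in which two agents run concurrently and each one dispatches work to more than one environment. Every name here (`run_in_env`, `agent`, the agent and environment labels) is hypothetical, and the environments are simulated by a function that echoes its input.

```python
import asyncio


async def run_in_env(env_name: str, command: str) -> str:
    """Stand-in for executing a command in a named environment."""
    await asyncio.sleep(0)             # yield control, as real I/O would
    return f"{env_name}: {command}"


async def agent(name: str, plan: list):
    """Run each (environment, command) pair in the agent's plan, in order."""
    results = [await run_in_env(env, cmd) for env, cmd in plan]
    return name, results


async def main() -> dict:
    plans = {
        "researcher": [("browser", "search docs"), ("python", "summarize")],
        "builder": [("python", "run tests"), ("shell", "build")],
    }
    # The agents run concurrently; gather returns results in submission order.
    pairs = await asyncio.gather(*(agent(n, p) for n, p in plans.items()))
    return dict(pairs)


results = asyncio.run(main())
```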