OpenAI Limits Access to Cybersecurity AI Model Over Misuse Risks

OpenAI plans to restrict access to a powerful new cybersecurity-focused AI model, reflecting growing concern about misuse as model capabilities approach what real-world attacks require.

AI security is now a core part of cybersecurity. In AIstify’s AI Security section, we cover how models are attacked, defended, and operated safely – from prompt injection and data leakage to supply-chain risk and model misuse. We track vendor tooling, red-teaming, evaluations, and the policies shaping secure deployment across cloud and edge. Whether you are defending systems or building them, this hub keeps you current on threats, mitigations, and the standards emerging around trustworthy AI.

Google is adding new mental health features to Gemini, including crisis detection tools and direct hotline access. The company is also committing $30 million to expand global support services.

Anthropic mistakenly triggered the removal of thousands of GitHub repositories while attempting to take down leaked source code. The company has since reversed most of the takedown actions.

Anthropic has again exposed the source code of its Claude Code tool due to a packaging error, raising concerns over software release practices.

Anthropic has launched the Anthropic Institute to study the societal, economic, and governance challenges posed by advanced AI systems. The initiative will combine research from engineers, economists, and social scientists.

OpenAI is acquiring AI security platform Promptfoo to enhance testing, safety, and governance tools for enterprise AI systems. The technology will be integrated into OpenAI’s Frontier platform for AI coworkers.

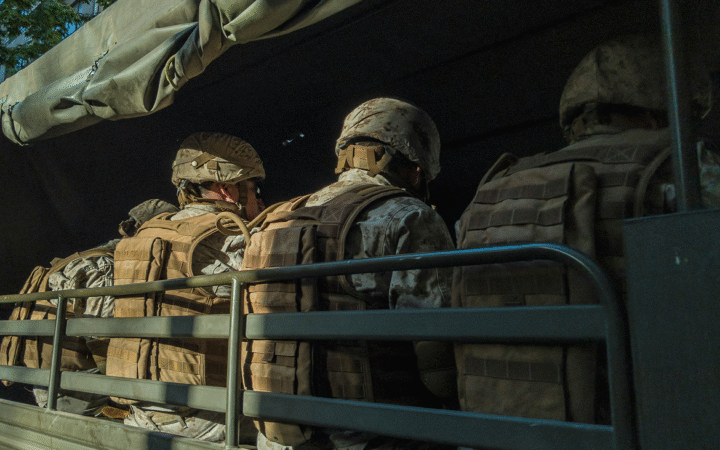

Despite President Trump’s directive to cease federal use of Anthropic’s Claude AI, U.S. military forces reportedly employed the model for intelligence, target selection, and battlefield simulations in airstrikes on Iran.

Anthropic has unveiled a revised Responsible Scaling Policy with a Frontier Safety Roadmap, regular Risk Reports, and a clearer separation between company commitments and industry recommendations.