AI Agents Can Now Hire Humans to Finish Tasks They Cannot

A new platform called Rent a Human allows AI agents to outsource tasks to real people when automation falls short, highlighting an unusual hybrid model of human-in-the-loop labor.

AI security is now a core part of cybersecurity. In AIstify’s AI Security section, we cover how models are attacked, defended, and operated safely – from prompt injection and data leakage to supply-chain risk and model misuse. We track vendor tooling, red-teaming, evaluations, and the policies shaping secure deployment across cloud and edge. Whether you are defending systems or building them, this hub keeps you current on threats, mitigations, and the standards emerging around trustworthy AI.

Moltbook, built on OpenClaw agentic AI, lets bots interact and form communities. Experts warn of security risks and governance challenges with AI-driven social networks.

A coalition of nonprofits urges federal suspension of xAI’s Grok AI, highlighting nonconsensual image generation, bias, and potential national security threats.

A new assessment by Common Sense Media finds xAI’s Grok chatbot exposes minors to sexual, violent, and unsafe content, with weak age verification and ineffective safety controls.

OpenAI’s latest ChatGPT model has cited Elon Musk-backed Grokipedia across multiple topics, prompting concerns among researchers about how low-credibility sources can influence AI-generated answers.

Anthropic publishes Claude’s constitution, a detailed framework guiding AI behavior, ethics, safety, and helpfulness, available under Creative Commons for transparency and research.

OpenAI now uses age prediction to adjust ChatGPT’s safety settings for teens. Users 18 and older can verify their age to disable extra restrictions.

U.S. senators are demanding detailed explanations from major tech platforms on how they prevent AI-generated sexual deepfakes and how such content is monetized. The inquiry follows renewed scrutiny of generative AI tools and their safeguards.

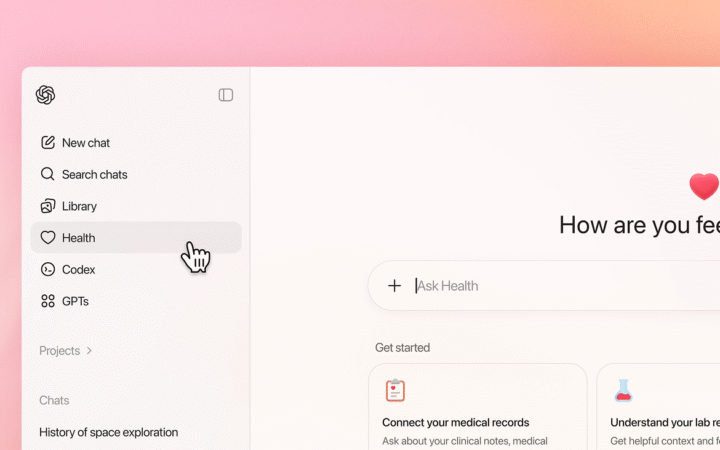

OpenAI introduced ChatGPT Health, a dedicated experience that securely integrates personal health data with AI, helping users navigate wellness, lab results, and appointments with added privacy protections.

Lenovo introduced Qira, a system-level AI spanning PCs, tablets, smartphones, and wearables. The assistant offers context-aware, cross-device intelligence for writing, collaboration, and workflow management.