Google has introduced TurboQuant, a new set of quantization algorithms designed to significantly improve the efficiency of artificial intelligence systems by reducing memory usage without sacrificing performance.

The announcement highlights a growing focus on optimizing the infrastructure behind large language models and vector search engines. These systems rely on high-dimensional vectors to represent complex data such as language, images, and user intent. While powerful, these vectors consume large amounts of memory, particularly in the key-value (KV) cache used during inference.
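
To give a sense of scale, the KV cache footprint can be estimated with simple arithmetic. The sketch below is illustrative only: the function name and the model shape (32 layers, 32 KV heads, head dimension 128, a 32,768-token context) are assumptions chosen for the example, not figures from Google's announcement.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bits_per_value):
    """Bytes needed to hold the cached keys and values for every layer and token."""
    # Factor of 2: one key vector and one value vector per token, head, and layer.
    values = 2 * layers * kv_heads * head_dim * seq_len * batch
    return values * bits_per_value / 8

# Hypothetical model shape, used only for illustration.
fp16_bytes = kv_cache_bytes(
    layers=32, kv_heads=32, head_dim=128,
    seq_len=32_768, batch=1, bits_per_value=16,
)
print(f"{fp16_bytes / 2**30:.1f} GiB")  # 16.0 GiB
```

Because the footprint grows linearly with both context length and bits per value, lowering the bit width is one of the few levers that shrinks the cache without shortening the context.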

TurboQuant addresses this bottleneck by compressing vector data more effectively than traditional methods. Existing approaches often introduce additional memory overhead through stored quantization parameters. Google’s method minimizes this overhead, enabling higher compression rates with minimal loss in accuracy.
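
The overhead from stored quantization parameters is easy to quantify. The following sketch assumes a conventional block-wise scheme in which each block of values carries a 16-bit scale and a 16-bit zero point; the block sizes and parameter widths are illustrative assumptions, not details taken from the announcement.

```python
def effective_bits(bits_per_value, block_size, param_bits=16, params_per_block=2):
    """Average bits per value once per-block quantization parameters are counted."""
    overhead = params_per_block * param_bits / block_size
    return bits_per_value + overhead

# A nominal 3-bit format with a scale and zero point per block (assumed layout).
print(effective_bits(3, block_size=32))   # 4.0
print(effective_bits(3, block_size=128))  # 3.25
```

In this assumed layout, a nominal 3-bit format with small blocks really costs 4 bits per value, which is the kind of hidden cost a method with little or no parameter overhead avoids.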

New Algorithms for Scalable AI

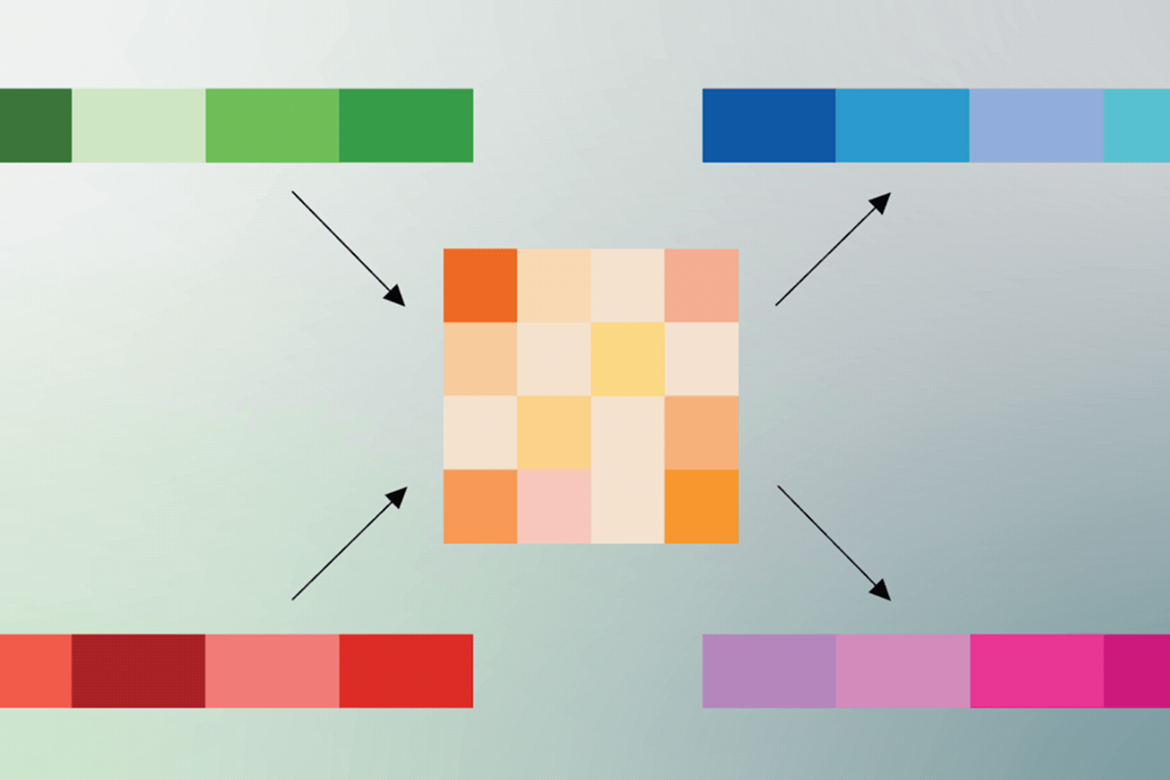

TurboQuant builds on two supporting techniques: Quantized Johnson-Lindenstrauss (QJL) and PolarQuant.

PolarQuant restructures vector data into polar coordinates, simplifying its geometry and enabling more efficient compression. This reduces computational overhead and eliminates the need for certain normalization steps.
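
The intuition behind a polar representation can be shown with a deliberately simplified sketch. It pairs up coordinates, converts each pair to a radius and an angle, and quantizes only the angle. This is not Google's PolarQuant algorithm: the function names, the 4-bit angle grid, and the choice to keep radii at full precision are all assumptions made for illustration.

```python
import numpy as np

def to_polar_pairs(x):
    """Group coordinates into pairs and express each pair as (radius, angle)."""
    pairs = x.reshape(-1, 2)  # requires an even number of coordinates
    radius = np.hypot(pairs[:, 0], pairs[:, 1])
    angle = np.arctan2(pairs[:, 1], pairs[:, 0])  # always in [-pi, pi]
    return radius, angle

def quantize_angle(angle, bits):
    """Map each angle to the index of one of 2**bits equal sectors of the circle."""
    levels = 2 ** bits
    step = 2 * np.pi / levels
    return np.floor((angle + np.pi) / step).astype(np.int64) % levels

def dequantize_angle(idx, bits):
    """Return the centre angle of each sector index."""
    step = 2 * np.pi / 2 ** bits
    return -np.pi + (idx + 0.5) * step

def from_polar_pairs(radius, angle):
    """Rebuild the flat vector from per-pair radii and angles."""
    return np.stack([radius * np.cos(angle), radius * np.sin(angle)], axis=1).reshape(-1)

rng = np.random.default_rng(0)
x = rng.standard_normal(128)

radius, angle = to_polar_pairs(x)
codes = quantize_angle(angle, bits=4)  # radii stay unquantized in this sketch
x_hat = from_polar_pairs(radius, dequantize_angle(codes, bits=4))

print(np.linalg.norm(x - x_hat) / np.linalg.norm(x))  # relative reconstruction error
```

The point of the sketch is that an angle always lies in a fixed, known range, so its quantization grid needs no per-block scale or zero point to be stored alongside the data.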

QJL applies a Johnson-Lindenstrauss transform, a random projection that approximately preserves the distances and inner products between vectors, and then keeps only a single sign bit for each projected value. Within TurboQuant it acts as a lightweight correction layer, improving accuracy after compression.
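
A one-bit random-projection estimator of this kind can be sketched in a few lines. The code below is an illustration under stated assumptions, not Google's implementation: the function names, the Gaussian projection matrix, and the projection size `m` are hypothetical, and the sketch shows the estimator on its own rather than as a correction stage inside TurboQuant.

```python
import numpy as np

def make_projection(dim, m, seed=0):
    """Random Gaussian projection matrix shared by keys and queries."""
    rng = np.random.default_rng(seed)
    return rng.standard_normal((m, dim))

def qjl_encode(key, S):
    """Keep only the sign of each projected coordinate, plus the key's norm."""
    signs = np.sign(S @ key)
    signs[signs == 0] = 1.0  # treat an exact zero as positive
    return signs.astype(np.int8), float(np.linalg.norm(key))

def qjl_inner_product(query, signs, key_norm, S):
    """Estimate <query, key> from the sign bits; the query stays unquantized."""
    m = S.shape[0]
    projected_query = S @ query
    # For a Gaussian row s, E[(s.q) * sign(s.k)] = sqrt(2/pi) * <q, k> / ||k||,
    # so rescaling by sqrt(pi/2) * ||k|| gives an unbiased estimate.
    return float(np.sqrt(np.pi / 2) * key_norm / m * (projected_query @ signs.astype(np.float64)))

rng = np.random.default_rng(1)
key = rng.standard_normal(64)
query = rng.standard_normal(64)

S = make_projection(dim=64, m=4096)
signs, key_norm = qjl_encode(key, S)

print("exact   :", float(query @ key))
print("estimate:", qjl_inner_product(query, signs, key_norm, S))
```

Only the sign bits and one norm are stored per key, and the estimate tightens as `m` grows, which is what makes the approach attractive as a cheap way to recover inner products after aggressive compression.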

Together, these methods allow TurboQuant to compress KV cache data to as little as three bits per value. The approach does not require retraining or fine-tuning models, making it easier to deploy across existing systems.

Performance Gains and Use Cases

In benchmark testing across tasks such as question answering, summarization, and code generation, TurboQuant maintained performance while significantly reducing memory usage.

Google reported up to a sixfold reduction in KV cache size and up to an eightfold speedup in attention computation on modern GPU hardware. The method also performed well in vector search, achieving high recall relative to existing techniques.
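
Recall in vector search measures how many of the true nearest neighbors a compressed index still returns. A minimal sketch of the metric follows; the helper names are hypothetical, the data is random, and the rounding step is a crude stand-in for compression rather than TurboQuant itself.

```python
import numpy as np

def top_k(scores, k):
    """Indices of the k largest scores, best first."""
    return np.argsort(-scores)[:k]

def recall_at_k(exact_ids, approx_ids):
    """Fraction of the exact top-k that the approximate search also returned."""
    exact = set(exact_ids.tolist())
    approx = set(approx_ids.tolist())
    return len(exact & approx) / len(exact)

rng = np.random.default_rng(0)
database = rng.standard_normal((1000, 64))
query = rng.standard_normal(64)

# Stand-in compression: round every value to one decimal place (NOT TurboQuant).
compressed = np.round(database, 1)

exact = top_k(database @ query, k=10)
approx = top_k(compressed @ query, k=10)
print(recall_at_k(exact, approx))
```

A recall of 1.0 means the compressed index returned exactly the same top-k set as the uncompressed one; lower values indicate neighbors lost to quantization error.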

These improvements are particularly relevant for large-scale AI deployments, where memory constraints and compute costs are major limiting factors.

Implications for AI Infrastructure

The release underscores the importance of foundational optimizations as AI systems scale. Efficient compression techniques can lower hardware requirements, reduce energy consumption, and improve response times.

TurboQuant is expected to play a role in applications such as semantic search, recommendation systems, and real-time AI services. It may also support large-scale platforms that rely on fast vector retrieval.

Google plans to present the research at ICLR 2026, and the related PolarQuant and QJL work is also scheduled for academic conferences. The methods are backed by theoretical analysis suggesting that they come close to the optimal limits on compression efficiency.

As AI adoption accelerates, innovations in core infrastructure such as compression are becoming increasingly critical to sustaining performance and scalability across systems.