OpenAI has announced it will discontinue Sora, its generative AI video application, ending one of the company’s most high-profile consumer products and halting a major partnership with Disney.

The company confirmed the decision in a statement, thanking users and creators while promising to share further details on timelines for shutting down the app and its API. OpenAI did not provide a reason for the move.

Sora, launched publicly in late 2024 with a standalone app released in 2025, enabled users to generate realistic videos from text prompts. A second-generation version introduced improved physics and audio capabilities, attracting widespread attention but also scrutiny from the entertainment industry.

Partnership Collapse and Legal Pressures

The shutdown effectively ends OpenAI’s agreement with Disney, which had planned to integrate licensed characters from franchises such as Marvel, Pixar, and Star Wars into Sora-generated content. The deal also included a potential $1 billion investment by Disney in OpenAI.

Disney confirmed that it will no longer proceed with the partnership, stating it respects OpenAI’s decision to exit the video generation space and shift priorities. The agreement had aimed to create “fan-inspired” videos and distribute curated content through Disney+.

Sora’s development had already drawn concern from media companies and industry groups. Critics pointed to the model’s opt-out system for copyrighted material, which required rights holders to actively request exclusion from training data.

Organizations representing content creators, including Japanese and U.S. studios, raised objections and filed legal challenges against AI companies over alleged unauthorized use of intellectual property. These concerns extended beyond OpenAI to other platforms offering generative video tools.

Strategic Shift Away From Video AI

The closure of Sora reflects a broader strategic shift within OpenAI as it prioritizes core AI capabilities such as text, coding, and reasoning systems. These areas are seen as more scalable and commercially viable, particularly in enterprise markets.

Generative video remains one of the most resource-intensive applications in AI, requiring significant computational power to produce high-quality outputs. Industry estimates have suggested that operating such systems can carry substantial ongoing costs, adding pressure to justify long-term investment.

At the same time, competition in the AI sector is intensifying. Rival companies have focused on specialized domains, particularly text-based models, where demand and monetization are more established.

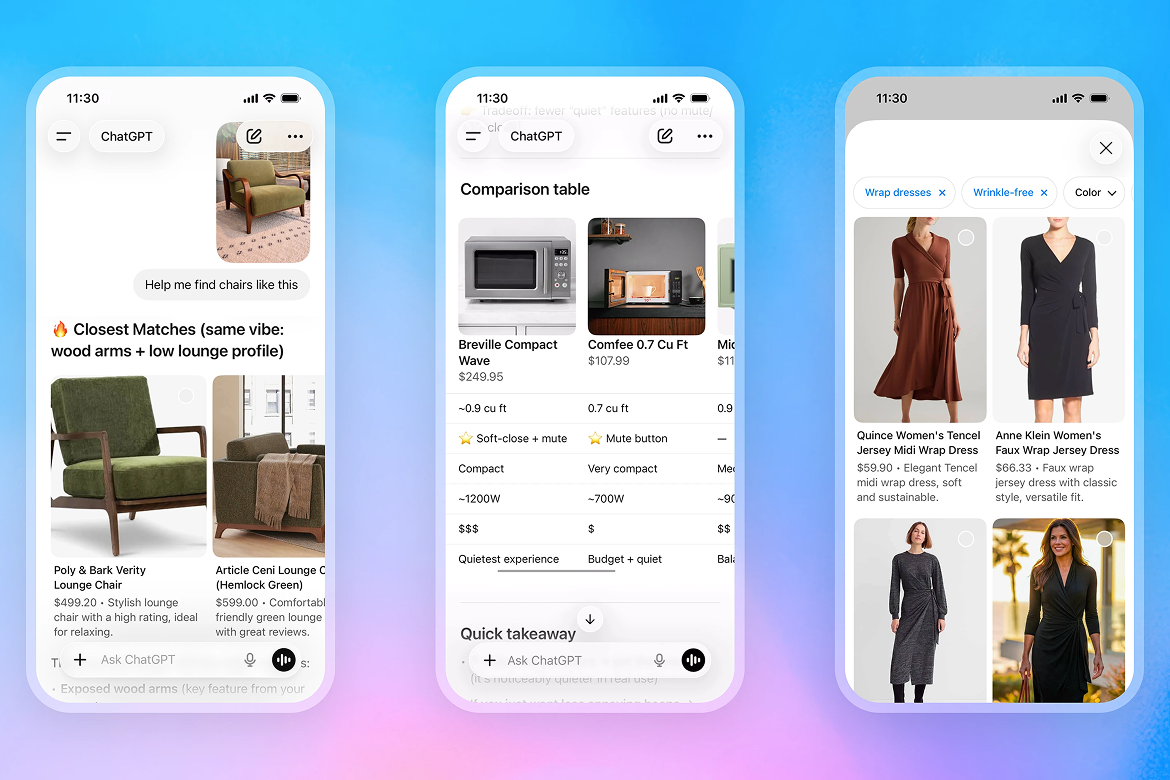

The shutdown also means that ChatGPT will no longer support video generation from text prompts, further signaling OpenAI’s retreat from this category.

Although OpenAI is exiting the space, generative video remains active on other platforms, where it continues to face legal scrutiny from major studios. Companies including Google, Meta, and ByteDance have all encountered challenges related to copyright enforcement and content ownership.