Salesforce is positioning Slack as a central interface for enterprise AI with a major expansion of Slackbot, transforming it from a personal assistant into a collaborative, organization-wide AI teammate. The update introduces more than 30 new capabilities designed to connect data, applications, and workflows into a unified conversational experience.

The move reflects a broader shift in enterprise AI adoption. While many organizations have deployed multiple AI tools across departments, Salesforce argues that fragmentation limits their effectiveness. Slackbot aims to address this by acting as a shared intelligence layer that connects systems and delivers actionable insights directly within team workflows.

Slackbot operates inside Slack’s existing environment, leveraging access to conversations, files, and organizational context. It inherits existing permissions and governance settings, allowing it to interact across enterprise systems without requiring additional configuration. This design reduces friction in adoption while maintaining compliance controls.

One of the key additions is meeting intelligence. Slackbot can now transcribe meetings, summarize discussions, and extract action items. It can also trigger follow-up actions in connected systems such as customer relationship management tools, reducing the need for manual updates after meetings.

Integration Across Enterprise Systems

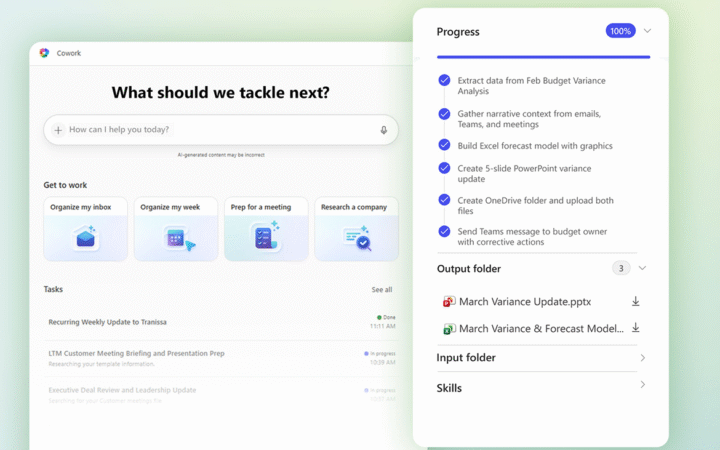

A central feature of the update is Slackbot’s ability to orchestrate workflows across multiple enterprise tools. Through a new Model Context Protocol (MCP) client, Slackbot can route tasks to various AI agents and applications, including systems used for sales, customer service, and IT operations. Employees can issue requests in natural language without needing to know which system executes the task.

Salesforce is also introducing reusable AI “skills,” which allow teams to standardize recurring workflows. These skills define inputs, steps, and outputs for specific tasks, enabling consistent execution across teams. Slackbot can automatically recognize when a task matches a predefined skill and apply it without user intervention.
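To make the idea of a skill more concrete, here is a minimal sketch in Python of how a definition with inputs, steps, and outputs might be modeled, along with a simple way to match a request to a skill. Salesforce has not published this structure. The `Skill` class, the `trigger_keywords` field, the `match_skill` function, and the keyword-based matching are all hypothetical and serve only as an illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Skill:
    """Hypothetical skill: required inputs, ordered steps, named outputs."""
    name: str
    trigger_keywords: set[str]           # assumption: matching is keyword-based
    inputs: list[str]
    steps: list[Callable[[dict], dict]]  # each step takes and returns the state
    outputs: list[str]

    def run(self, values: dict) -> dict:
        # Refuse to run if a declared input is missing.
        missing = [key for key in self.inputs if key not in values]
        if missing:
            raise ValueError(f"missing inputs: {missing}")
        state = dict(values)             # work on a copy of the caller's data
        for step in self.steps:
            state = step(state)
        # Return only the declared outputs.
        return {key: state[key] for key in self.outputs}


def match_skill(request: str, skills: list[Skill]) -> Optional[Skill]:
    """Return the first skill whose keywords all appear in the request."""
    words = set(request.lower().split())
    for skill in skills:
        if skill.trigger_keywords <= words:
            return skill
    return None


# Illustrative skill: turn raw notes into a one-line status summary.
def collect_updates(state: dict) -> dict:
    notes = state["raw_notes"].split(";")
    state["updates"] = [note.strip() for note in notes if note.strip()]
    return state


def format_summary(state: dict) -> dict:
    state["summary"] = f"{state['team']}: " + ", ".join(state["updates"])
    return state


status_skill = Skill(
    name="weekly_status",
    trigger_keywords={"weekly", "status"},
    inputs=["team", "raw_notes"],
    steps=[collect_updates, format_summary],
    outputs=["summary"],
)

skill = match_skill("Post the weekly status for sales", [status_skill])
result = skill.run({"team": "Sales", "raw_notes": "closed two deals; demo scheduled"})
print(result["summary"])
```

Declaring inputs and outputs up front is what would let different teams run the same skill and get results in the same shape. A production system would presumably match requests with a language model rather than keywords.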

For smaller businesses, Salesforce has embedded customer relationship management capabilities directly into Slackbot. The system can automatically capture customer interactions from conversations, update records, and track deals without requiring a separate CRM interface. For larger enterprises, Slackbot serves as a conversational layer over Salesforce’s Customer 360 platform, enabling users to update opportunities, manage cases, and trigger workflows without leaving Slack.

Slackbot also extends to desktop-level interactions, allowing users to act on content across applications while maintaining context from Slack and connected systems. This reduces the need to switch between tools and manually transfer information.

Salesforce reports strong early adoption, with Slackbot becoming one of the fastest-growing features in its product history. The company's internal data suggests that employees using the tool can save significant time on routine tasks, a sign of growing demand for AI systems that integrate directly into existing workflows.

The expansion underscores Salesforce’s broader strategy to position Slack as the operating system for work, where human collaboration and AI-driven automation converge in a single interface.