OpenAI is rolling out a redesigned shopping experience in ChatGPT, shifting its strategy toward product discovery after scaling back its earlier Instant Checkout feature.

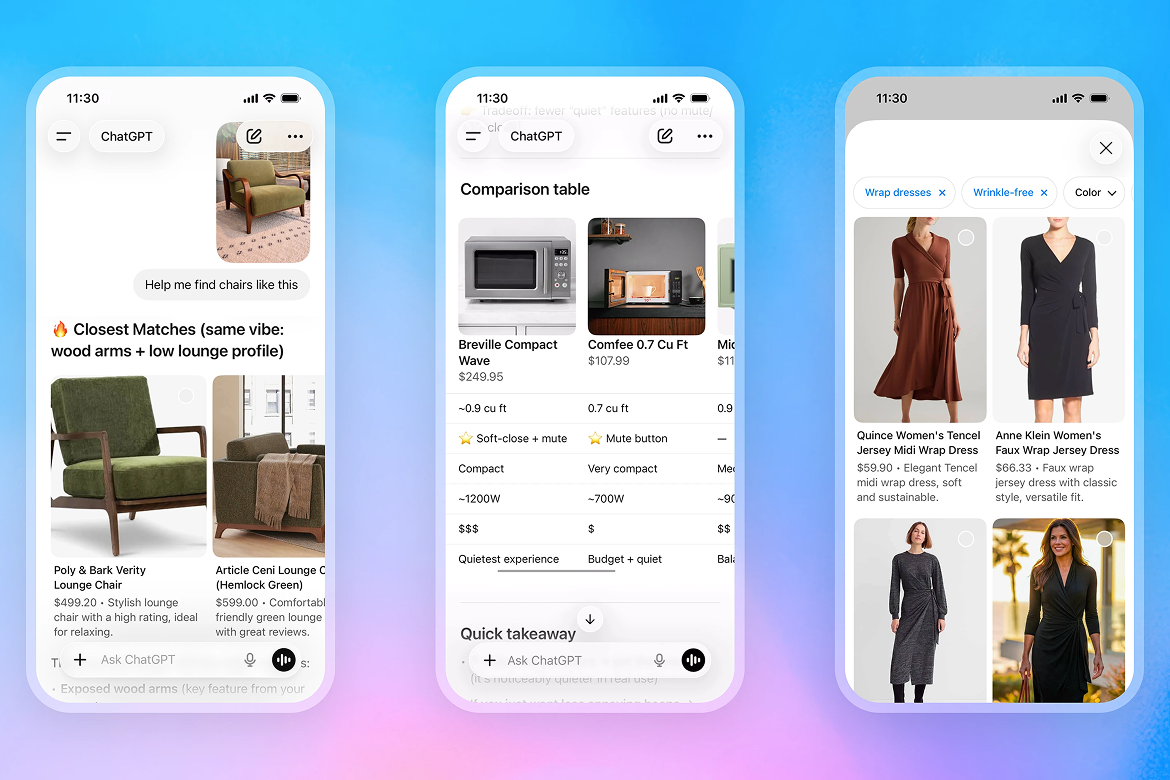

The update allows users to search for products by describing what they need or uploading images for reference. ChatGPT then generates visual results that can be compared side by side, with details such as pricing, features, and reviews integrated into the interface.

OpenAI said the system is now faster, returns more relevant results, and covers more products, which it says makes results more accurate and up to date. The goal is to simplify a process that often requires users to navigate multiple websites and sources before making a decision.

Pivot Away From In-Chat Transactions

The redesign follows OpenAI’s decision to move away from Instant Checkout, a feature launched last year that enabled users to complete purchases directly within ChatGPT. The company initially positioned the tool as a key step toward AI-driven commerce.

However, the feature faced challenges. Analysts noted difficulties in onboarding merchants, maintaining accurate product data, and supporting common e-commerce functions such as multi-item carts and loyalty integrations.

OpenAI acknowledged these limitations, stating that the checkout model did not provide the flexibility required for a broad retail ecosystem. Instead, the company is now focusing on helping users discover products while allowing merchants to retain control over transactions.

Under the new approach, purchases are completed through external merchant platforms, often via in-app browsers or dedicated integrations.

Expanding Merchant Ecosystem

The updated experience is supported by deeper integration with retailers, who can now provide product feeds and promotional data directly to ChatGPT. The aim is for their offerings to be represented more completely and accurately in search results.

Major brands including Target, Sephora, and Nordstrom have already adopted the new system. Additional integrations allow companies to build custom applications within ChatGPT, giving them more control over the user experience and transaction flow.

Walmart has introduced a dedicated in-ChatGPT shopping interface that supports account linking, loyalty programs, and payments. Meanwhile, Shopify is expanding its role by enabling merchants to connect storefronts to its catalog, with shoppers completing purchases through embedded browsing experiences.

Shopify is also launching a new service called Agentic Plan, designed to help merchants without existing storefronts surface their products across AI platforms, including ChatGPT and Google Gemini.

The shift reflects a broader trend toward “agentic commerce,” where AI systems assist users in navigating complex purchasing decisions rather than handling transactions directly.

OpenAI’s updated strategy positions ChatGPT as a discovery layer within the retail ecosystem, focusing on intent generation and product comparison. As AI becomes more integrated into shopping workflows, the balance between platform control and merchant autonomy is likely to remain a key area of development.