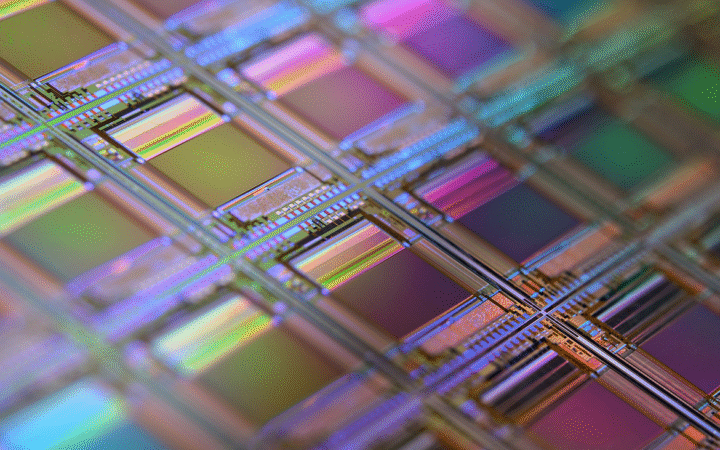

Intel said Tuesday it will participate in Elon Musk’s “Terafab” initiative, a project focused on expanding semiconductor manufacturing and generating massive computing power for artificial intelligence and robotics. The company did not disclose its specific responsibilities, but it confirmed the partnership in a post on X, highlighting its ability to design, fabricate, and package high-performance chips at scale. Intel shares rose about 2% following the announcement.

> Intel is proud to join the Terafab project with @SpaceX, @xAI, and @Tesla to help refactor silicon fab technology.
>
> Our ability to design, fabricate, and package ultra-high-performance chips at scale will help accelerate Terafab’s aim to produce 1 TW/year of compute to power… pic.twitter.com/2vUmXn0YhH
>
> Intel (@intel) on X, April 7, 2026

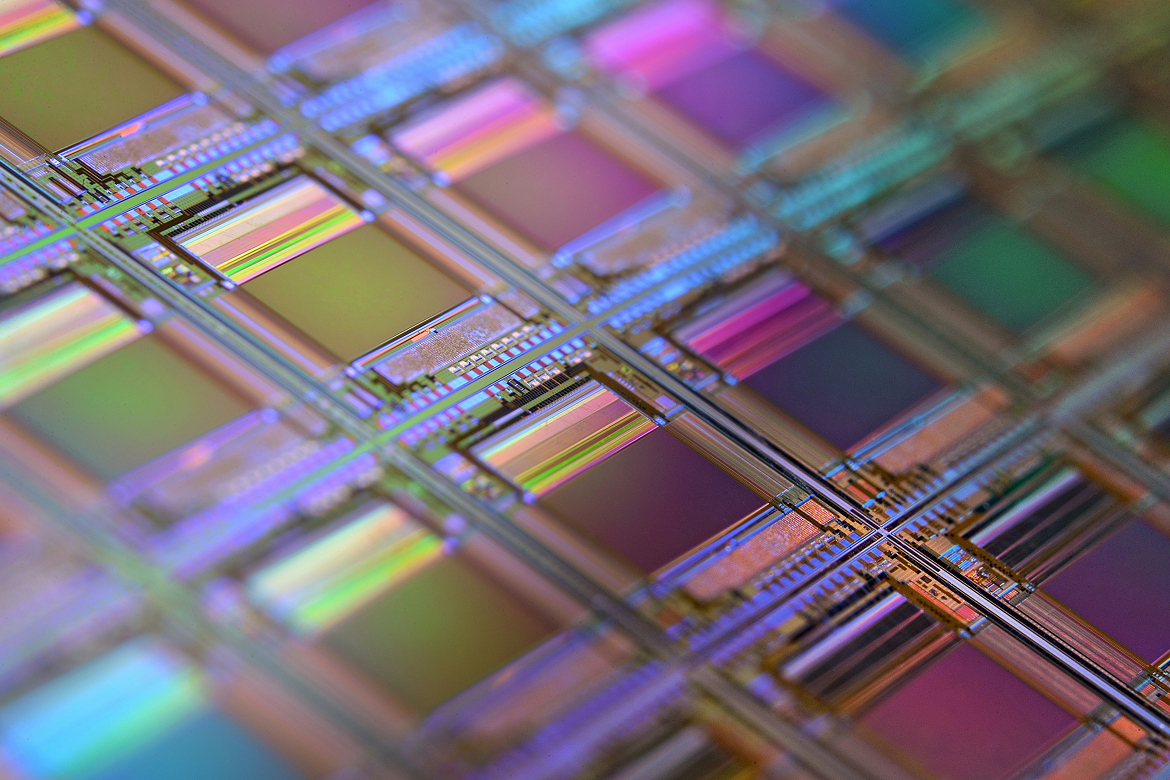

The Terafab project brings together several of Musk’s companies, including SpaceX, xAI, and Tesla, in an effort to rethink how advanced chips are produced. The initiative aims to deliver as much as 1 terawatt (TW) per year of compute capacity, a scale that reflects the rapidly increasing demands of AI systems. Intel’s contribution appears to center on its core strength in semiconductor manufacturing, particularly its ability to integrate chip design, fabrication, and advanced packaging.

Although details remain limited, Intel’s involvement signals a potential shift in how large-scale AI infrastructure is developed. The company recently hosted Musk and members of the xAI team at its headquarters, suggesting that collaboration is at an early stage. A photo shared by Intel showed Musk alongside Intel CEO Lip-Bu Tan, underscoring the senior-level attention the partnership is receiving.

Terafab’s ambition is notable even within the context of today’s AI boom. Producing 1 terawatt of compute annually would require vast manufacturing capacity and energy resources, far exceeding what most AI firms currently deploy. The project appears designed to support Musk’s expanding ecosystem, including AI models developed by xAI, autonomous systems at Tesla, and data-intensive operations at SpaceX.

A New Model for AI Infrastructure

The collaboration reflects a broader trend toward tighter integration between chipmakers and AI developers. Instead of relying solely on external suppliers, companies are increasingly forming partnerships to secure dedicated compute capacity and optimize hardware for specific workloads. Intel’s manufacturing capabilities could complement Musk’s vertically integrated approach across hardware, software, and data.

At the same time, the move positions Intel more directly in competition with other semiconductor leaders benefiting from the AI surge. Nvidia has dominated the market for AI chips, while companies like AMD and Broadcom are expanding their roles through custom silicon and infrastructure partnerships. By aligning with Terafab, Intel may be seeking to strengthen its relevance in next-generation AI systems.

Scaling Beyond Traditional Limits

The Terafab initiative also highlights the industrial scale that AI development is reaching. Meeting the project’s compute targets would require advances not only in chip design but also in fabrication processes, supply chains, and energy efficiency. Intel emphasized that its ability to produce and package chips at scale will be central to achieving these goals.

For Musk’s companies, the project could provide greater control over critical infrastructure, reducing reliance on third-party suppliers and enabling faster iteration of AI systems. For the semiconductor industry, it points to a future where partnerships between chipmakers and AI firms become essential to meeting the growing demand for compute.