SoftBank Secures $40B Loan to Expand OpenAI Investment

SoftBank has secured a $40 billion bridge loan to deepen its investment in OpenAI and accelerate its broader AI strategy.

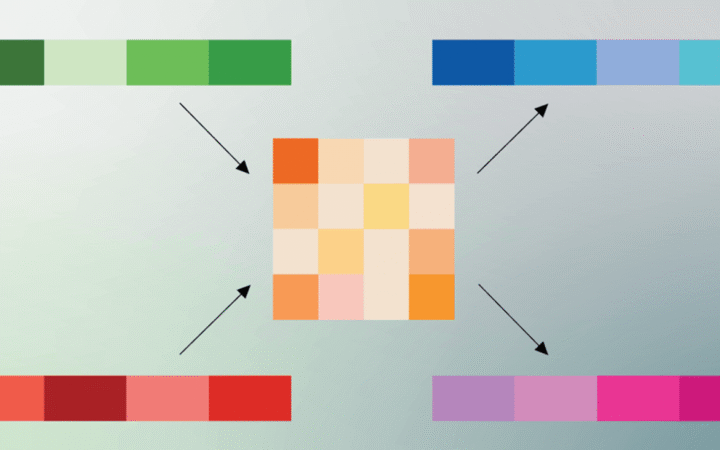

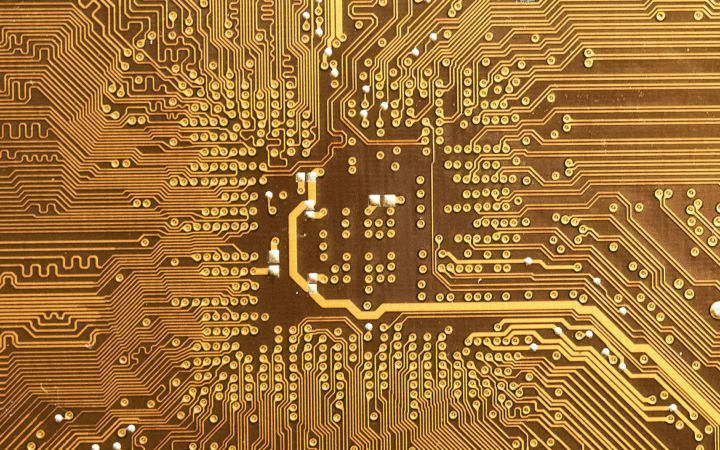

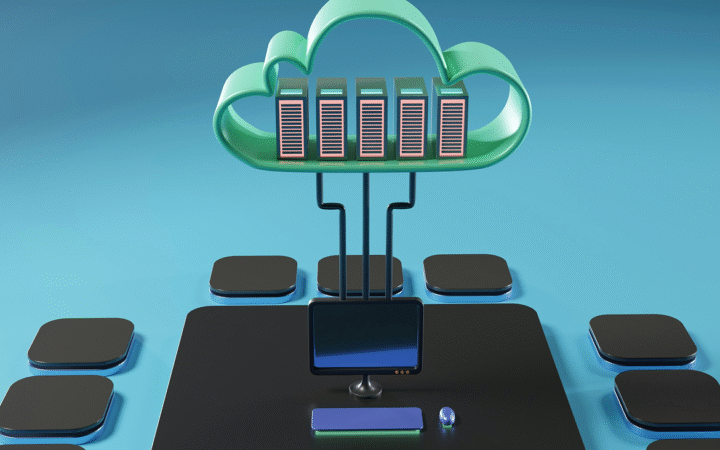

AI infrastructure refers to the combination of hardware, software, and cloud systems that provides the computing foundation needed to build, train, and deploy artificial intelligence models. It includes high-performance accelerators such as GPUs and TPUs, data storage, networking, and specialized frameworks optimized for large-scale machine learning. Modern AI infrastructure supports the full lifecycle of model development, from data preprocessing and training to deployment and monitoring. Cloud providers such as Google Cloud, AWS, and Microsoft Azure have built AI infrastructure platforms that let organizations scale workloads efficiently and securely. As AI systems grow more complex, scalable infrastructure has become a strategic asset, powering advances in generative AI, automation, and enterprise applications.

Google has introduced TurboQuant, a new compression algorithm that reduces memory usage in AI systems while maintaining accuracy, improving performance in large models and search.

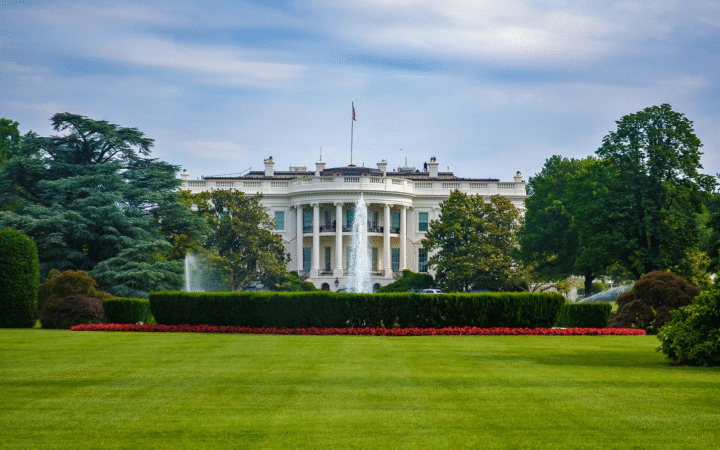

President Donald Trump has appointed top tech executives, including leaders from Meta, Nvidia, and Oracle, to a council shaping U.S. AI policy and strategy.

Alibaba has unveiled a new CPU designed for AI agents, focusing on inference and customizable workloads. The chip reflects China’s push to build domestic AI infrastructure.

Elon Musk announced plans for Terafab, a dual chip factory project by Tesla and SpaceX to produce AI chips for vehicles, robots, and space-based data centers.

Surging investment in AI data centers is fueling demand for skilled trade workers, creating labor shortages and rising wages. The trend highlights the physical infrastructure behind AI growth.

Nvidia CEO Jensen Huang said demand for Blackwell and Vera Rubin systems could reach $1 trillion by 2027, as the company unveiled new chips, racks, and AI infrastructure at GTC.

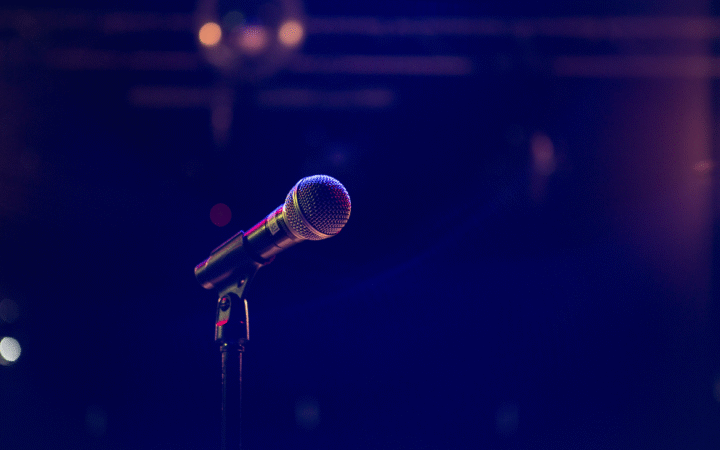

Nvidia CEO Jensen Huang will deliver the keynote at the GTC 2026 conference, where investors expect new AI product announcements and demand outlook updates.

Nebius has signed a long-term AI infrastructure agreement with Meta worth up to $27 billion, providing large-scale compute capacity powered by Nvidia’s Vera Rubin platform.

Amazon and Cerebras have partnered to combine their AI chips in a new AWS service designed to accelerate inference for chatbots, coding tools, and other generative AI applications.