Uber is expanding its use of Amazon’s custom chips to improve computing performance and support growing AI workloads. The move builds on the companies’ existing cloud partnership through Amazon Web Services (AWS).

Uber will deploy AWS Graviton processors to improve general computing efficiency and Trainium chips to train AI models that power its platform. These models are used to optimize ride-matching algorithms, enhance delivery logistics, and personalize user experiences across its applications.

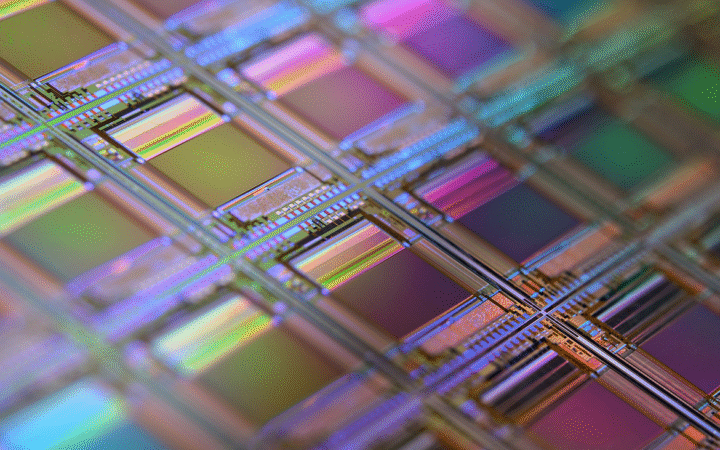

The adoption of specialized chips reflects Uber’s efforts to scale its digital infrastructure as demand for real-time services increases. Faster processing and more efficient model training are expected to improve system responsiveness and reduce operational costs.

For Amazon, the deal highlights its strategy of promoting in-house silicon as an alternative to traditional GPU-based infrastructure. AWS has been positioning its custom chips as cost-effective options for both AI training and inference, targeting enterprise customers that want performance and scalability.

The partnership underscores a broader industry shift toward vertical integration in AI infrastructure, where companies combine software optimization with custom hardware. As competition intensifies in AI-driven services, access to tailored computing resources is becoming a key differentiator for large-scale platforms.