Runway is expanding beyond AI video generation with the launch of a $10 million venture fund aimed at backing early-stage startups building across AI, media, and simulation technologies, according to a report by TechCrunch. The initiative reflects a broader push to develop an ecosystem around what the company describes as “video intelligence.”

The fund will target three areas: core AI infrastructure, application-layer companies building on foundation models, and new forms of media creation. Runway plans to invest up to $500,000 per startup at the pre-seed and seed stages, supporting companies that explore use cases beyond its own product roadmap.

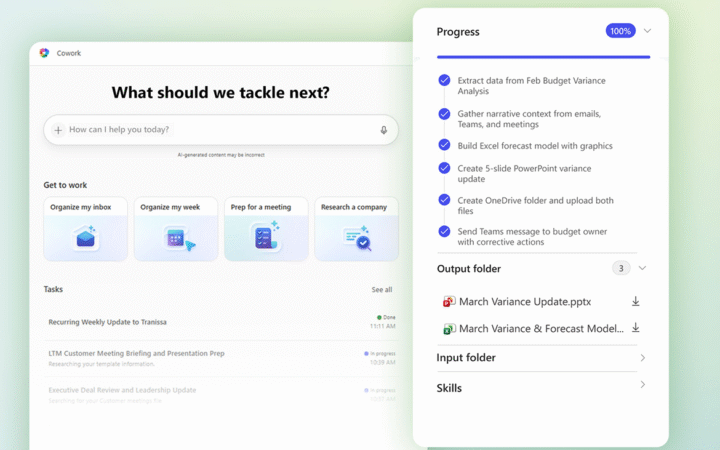

Alongside the fund, Runway introduced a Builders program offering startups free API credits and access to its real-time video agent technology. The program is designed to encourage experimentation with interactive AI systems, including virtual characters capable of real-time communication through video and audio.

Runway’s strategy builds on its development of “general world models,” which aim to combine video, audio, text, and image data into unified AI systems. These models are intended to enable immersive, interactive environments where users can engage with AI-generated content in real time.

The company has already backed startups such as LanceDB and Tamarind Bio, reflecting its interest in both AI infrastructure and applied use cases. The move positions Runway alongside other AI firms, including OpenAI and Perplexity, that are launching venture funds to support ecosystem growth.

The initiative highlights a shift in the AI industry toward platform-driven strategies, in which companies invest in external developers to expand applications and accelerate adoption of emerging multimodal technologies. It also builds on Runway’s recent collaboration with Nvidia on real-time AI video generation capable of producing high-definition output in under 100 milliseconds, a sign of rapid progress in interactive AI media.