Guide Labs introduced Steerling-8B, an 8-billion-parameter large language model designed for interpretability. Unlike typical LLMs, every token generated by Steerling-8B can be traced to its training data, enabling developers to understand why the model produces certain outputs.

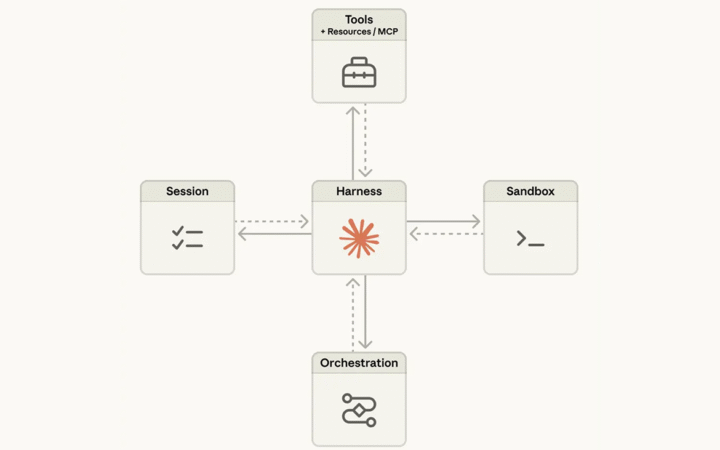

The architecture uses a concept layer that categorizes training data, allowing control over sensitive topics such as violence, drug use, or copyrighted content. Founder and CEO Julius Adebayo said the model also tracks “discovered concepts,” preserving emergent behaviors like reasoning about topics not explicitly in the training set, such as quantum computing.
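To make the idea of a concept layer concrete, here is a minimal toy sketch in Python. It is not Guide Labs' implementation: the concept names, weights, and function names are all hypothetical, and the design (a small "concept bottleneck" where every output score is a sum of per-concept contributions) is only one common way such a layer could work. It shows how routing predictions through named concepts lets a developer both trace a score back to concepts and suppress a sensitive one.

```python
from typing import Dict, List

# All names and numbers below are illustrative assumptions,
# not Steerling-8B's actual concepts or weights.

def concept_activations(hidden: List[float],
                        concept_weights: Dict[str, List[float]]) -> Dict[str, float]:
    """Project a hidden vector onto each named concept direction (dot product)."""
    return {
        name: sum(h * w for h, w in zip(hidden, weights))
        for name, weights in concept_weights.items()
    }

def steer(activations: Dict[str, float],
          scale: Dict[str, float]) -> Dict[str, float]:
    """Rescale selected concepts; a scale of 0.0 suppresses a concept entirely."""
    return {name: value * scale.get(name, 1.0) for name, value in activations.items()}

def token_contributions(activations: Dict[str, float],
                        token_weights: Dict[str, Dict[str, float]]) -> Dict[str, Dict[str, float]]:
    """For each token, record how much each concept contributes to its score."""
    return {
        token: {name: activations[name] * weight for name, weight in weights.items()}
        for token, weights in token_weights.items()
    }

def token_scores(contributions: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """A token's score is the sum of its per-concept contributions."""
    return {token: sum(parts.values()) for token, parts in contributions.items()}

# Toy inputs: a 2-dimensional hidden state and two hypothetical concepts.
hidden = [1.0, 2.0]
concept_weights = {"violence": [1.0, 0.0], "cooking": [0.0, 1.0]}
token_weights = {
    "attack": {"violence": 2.0, "cooking": 0.0},
    "simmer": {"violence": 0.0, "cooking": 1.5},
}

activations = concept_activations(hidden, concept_weights)
contributions = token_contributions(activations, token_weights)
scores = token_scores(contributions)

# Suppress the "violence" concept and recompute the scores.
steered = steer(activations, {"violence": 0.0})
steered_scores = token_scores(token_contributions(steered, token_weights))
```

In this toy setup, `contributions["attack"]` shows that the score for "attack" comes entirely from the "violence" concept, and setting that concept's scale to zero removes the score without affecting "simmer". A production model would learn the concept directions and operate on far larger vectors, but the tracing and steering logic follows the same pattern.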

Steerling-8B achieves roughly 90% of the performance of larger frontier models while using less training data. The company plans to scale the architecture and to offer API and agentic access. Guide Labs emerged from Y Combinator and raised $9 million in seed funding from Initialized Capital in November 2024.

Adebayo emphasized that interpretable LLMs are critical for regulated industries, scientific research, and consumer applications, enabling safer and more accountable AI decisions. “As we’re going after these models that are going to be super intelligent, you don’t want something to be making decisions on your behalf that’s sort of mysterious to you,” he said.