Debate over the arrival of artificial general intelligence, or AGI, is intensifying as industry leaders offer increasingly divergent definitions of the milestone.

Nvidia CEO Jensen Huang recently said he believes AGI has already been achieved, a claim that underscores how flexible interpretations of the concept have become. His comments came during a discussion about the future of AI systems and their capabilities.

AGI is generally understood as a form of artificial intelligence that can perform tasks at a level comparable to human intelligence across a wide range of domains. However, there is no universally accepted definition, allowing companies and researchers to apply different benchmarks.

Competing Definitions of AGI

Huang has previously defined AGI as software capable of passing tests that approximate general human intelligence at a competitive level. Under that framework, he suggested in 2023 that such systems could emerge within five years.

In more recent remarks, Huang appeared to adopt a looser interpretation. When asked whether AI could build and run a billion-dollar company, he suggested that the threshold for AGI may already have been met.

His argument rests on a narrow measure of success: the ability of AI systems to generate significant economic value, even if only temporarily. This contrasts with more traditional definitions that emphasize sustained autonomy, reasoning, and adaptability across complex real-world scenarios.

The discussion reflects a broader trend in the AI industry, where the definition of AGI is often shaped by context, incentives, and technological progress.

Industry Pressures and Expectations

The debate comes at a time when leading AI companies are investing heavily in infrastructure, research, and product development. These efforts have driven rising costs and increased pressure to demonstrate tangible progress toward advanced capabilities.

AGI has become a central concept in this narrative, often used to frame long-term goals and justify large-scale investment. However, the lack of a clear definition makes it difficult to measure progress or compare claims across organizations.

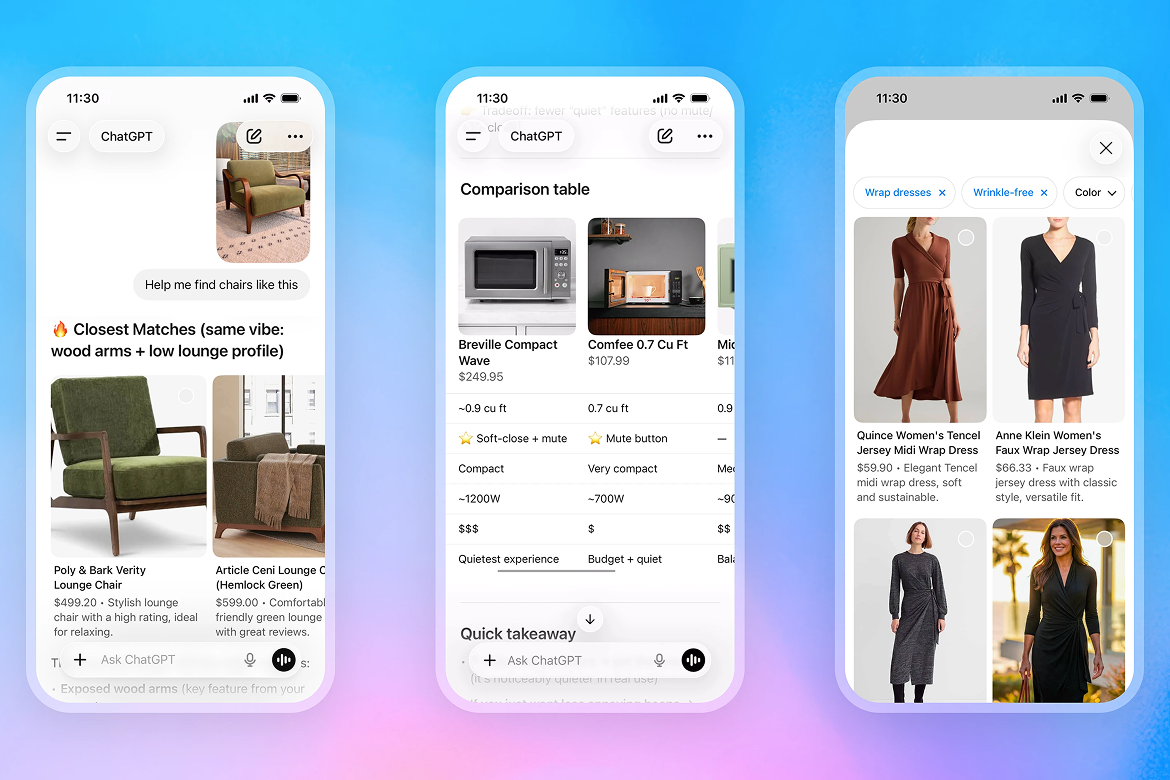

Some analysts argue that current AI systems, including agent-based tools capable of executing complex workflows, represent meaningful steps toward general intelligence. Others maintain that these systems remain specialized and dependent on human oversight.

The emergence of autonomous agents has added complexity to the discussion. These systems can perform multi-step tasks and interact with software environments, but they do not yet exhibit the full range of cognitive abilities associated with human intelligence.

As a result, the question of whether AGI has been achieved remains largely a matter of definition. The answer depends less on technological breakthroughs than on how the concept itself is defined.

The ongoing debate highlights a key challenge for the AI industry: aligning expectations, definitions, and technical reality as development accelerates. Until a consensus emerges, claims about AGI are likely to remain contested, reflecting both genuine progress and differing interpretations of what constitutes general intelligence.